By: C S Nakul Kalyan; Asia University

Abstract

Advances in Artificial Intelligence (AI) have made it possible to create realistic cloned voices, which are already being used in fraudulent calls. This study discusses the growing threat of AI-generated voices in phishing scams and proposes a multilayered framework to address it. The framework combines threat modeling, multi-modal feature analysis, and classification of calls as real or fake. Beyond detecting manipulated speech, it incorporates countermeasures against manipulated voices in scam calls, including technical defenses, regulatory actions, and public awareness initiatives. The study walks through how the framework is used and highlights the potential of AI-generated voice phishing to turn traditional fraud into automated digital fraud.

Keywords

AI-generated Voices, Voice cloning, Deepfake detection, Voice phishing, Scam automation.

Introduction

The rapid development of AI has produced capable voice synthesis and voice cloning techniques. Beyond their use in customer service and entertainment, these technologies pose a new threat: AI-generated voice phishing, which allows attackers to mimic trusted entities and spread misinformation in their name. Voice-enabled AI bots can carry out scams such as financial fraud and identity theft autonomously [1]. The threat has grown as open-source tools for deepfake creation and voice cloning have made voice-based fraud easier for attackers to carry out [2][4]. To counter these fraudulent activities, this article presents a multi-modal detection framework that combines threat modeling, multi-modal analysis, and deepfake voice detection to determine whether a call is a scam [3][5].

Proposed Methodology

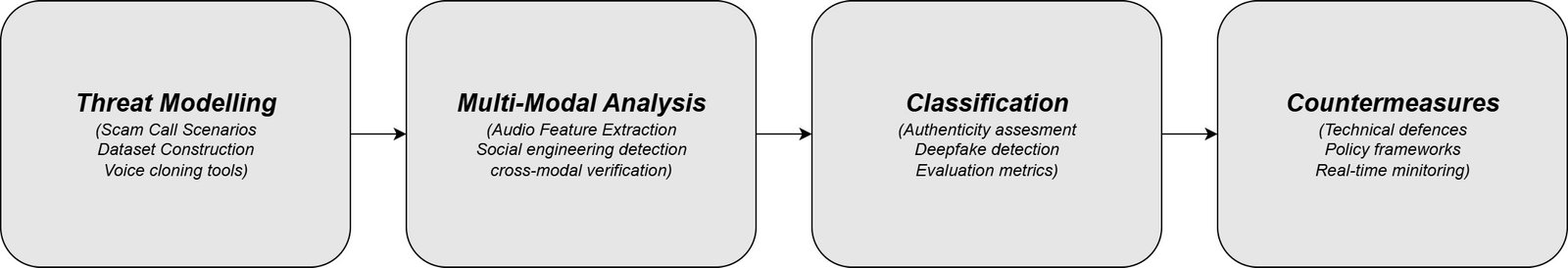

This study proposes a multi-modal framework to detect AI-generated scam calls. The integrated method comprises threat modeling, multi-modal analysis, deepfake detection, and countermeasures, which are shown in Figure 1 and described below.

Threat Modeling and Data Simulation

Scam Call Scenarios

Common scam call scenarios include bank account fraud, IRS impersonation scams, crypto-transfer fraud, and identity theft. In real-world cases, attackers target these domains to obtain sensitive information or money.

Dataset Construction

The dataset combines legitimate speech from sources such as GRID, TED Talks, and news interviews with artificially generated scam calls. To clone scammer voices from audio samples, voice cloning tools such as Real-Time Voice Cloning (RTVC), OpenVoice, and F5-TTS were used, which enable multilingual and cross-accent voice replication. The dataset composition and voice cloning tools are presented in Table 1 below:

Table 1: Dataset Composition and Voice Cloning Tools

| Data Source | Sample Count | Duration (Hours) | Language/Accent | Voice Cloning Tool | Purpose |
| --- | --- | --- | --- | --- | --- |
| GRID Corpus | 1,200 | 15.5 | English (British) | RTVC | Baseline legitimate speech |
| TED Talks | 800 | 22.3 | English (Multi-accent) | OpenVoice | Natural conversation patterns |
| News Interviews | 600 | 12.8 | English (American) | F5-TTS | Professional speech samples |
| Synthetic Scam Calls | 2,400 | 18.7 | Multilingual | RTVC + OpenVoice | AI-generated fraud scenarios |
| Partially Manipulated | 900 | 8.2 | English + Others | F5-TTS | Hybrid real-synthetic content |
| Total Dataset | 5,900 | 77.5 | Mixed | Various | Complete framework testing |
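The totals in Table 1 can be checked with a few lines of arithmetic. The sketch below transcribes the sample counts and durations from the table and recomputes the totals and each source's share of the samples; the names `TABLE_1_ROWS`, `dataset_totals`, and `sample_share` are illustrative and not part of the original study.

```python
# Rows transcribed from Table 1: (data source, sample count, duration in hours).
TABLE_1_ROWS = [
    ("GRID Corpus", 1200, 15.5),
    ("TED Talks", 800, 22.3),
    ("News Interviews", 600, 12.8),
    ("Synthetic Scam Calls", 2400, 18.7),
    ("Partially Manipulated", 900, 8.2),
]


def dataset_totals(rows):
    """Return (total samples, total hours rounded to one decimal place)."""
    total_samples = sum(count for _, count, _ in rows)
    total_hours = round(sum(hours for _, _, hours in rows), 1)
    return total_samples, total_hours


def sample_share(rows):
    """Return each source's share of the total sample count, in percent."""
    total_samples, _ = dataset_totals(rows)
    return {
        name: round(100 * count / total_samples, 1)
        for name, count, _ in rows
    }
```

Calling `dataset_totals(TABLE_1_ROWS)` reproduces the 5,900 samples and 77.5 hours in the final row, and `sample_share` shows that the synthetic scam calls account for roughly 40.7% of all samples.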

Annotation Process

During annotation, each audio sample was analyzed and assigned to one of three categories: real, deepfake, or partially manipulated. To support accurate analysis, additional metadata was annotated, including language, urgency cues, and contextual content. The resulting annotations make it possible to compare human and AI-generated phishing strategies.
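One way to picture such an annotation record is sketched below. The field names, the `Label` enum, and the `cue_counts_by_label` helper are assumptions made for illustration; the study does not specify its annotation schema.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Label(Enum):
    """The three annotation categories named in the text."""
    REAL = "real"
    DEEPFAKE = "deepfake"
    PARTIALLY_MANIPULATED = "partially_manipulated"


@dataclass
class Annotation:
    """Hypothetical record for one annotated audio sample."""
    sample_id: str
    label: Label
    language: str
    urgency_cues: List[str] = field(default_factory=list)
    context: str = ""  # free-text note on the contextual content


def cue_counts_by_label(annotations):
    """Count how often each urgency cue appears within each category."""
    counts = {label: Counter() for label in Label}
    for ann in annotations:
        counts[ann.label].update(ann.urgency_cues)
    return counts
```

Grouping cue counts by label in this way is one simple route to the comparison between human and AI-generated phishing strategies that the annotations are meant to support.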

Multi-modal Feature Analysis

Audio Feature Extraction

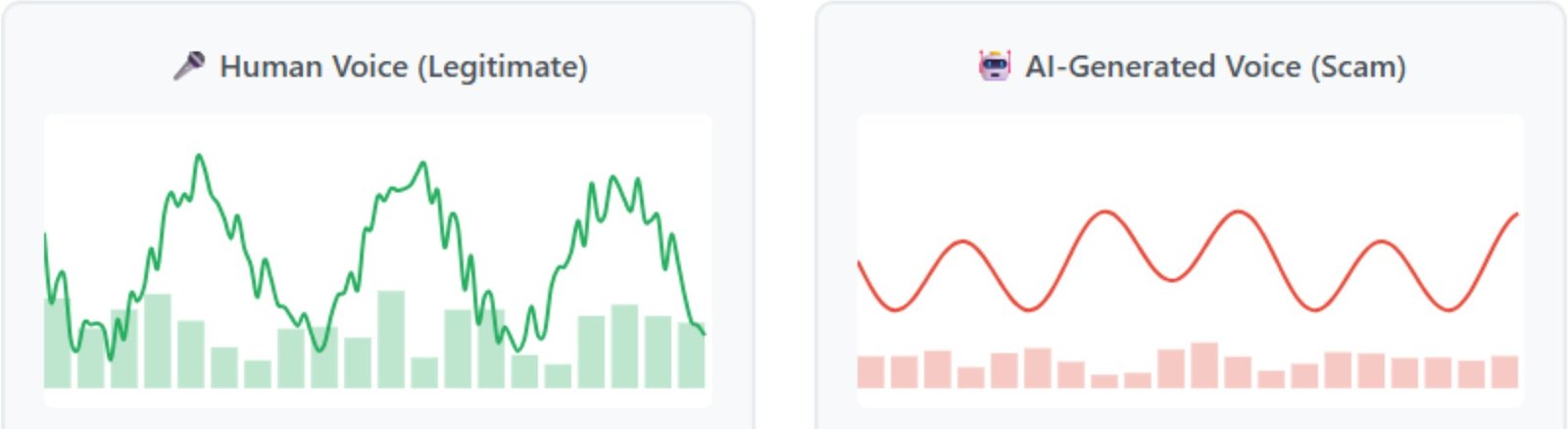

Automatic Speech Recognition (ASR) models were used to process the speech signals and convert the audio into phoneme-level representations. Patterns such as pitch fluctuation, prosody, and timing irregularities were then extracted from the samples. AI-generated voices tend to show inconsistencies, such as a monotonous tone or overly smooth transitions, so these patterns help determine whether a voice is real or AI-generated. The audio feature comparison for real and AI-generated voices is shown in Figure 2 below:

Figure 2: Audio Feature Comparison
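As a rough illustration of the pitch and timing features mentioned above, the following sketch computes simple statistics from a pitch contour and from word timestamps. It assumes an upstream tool has already produced per-frame pitch values in Hz (with 0 marking unvoiced frames) and per-word `(start, end)` times in seconds; both input conventions and all function names are assumptions, not the study's actual pipeline.

```python
from statistics import mean, pstdev


def pitch_variability(f0_hz):
    """Coefficient of variation of the voiced pitch values.

    Values near 0 indicate a flat, monotonous contour.
    """
    voiced = [f for f in f0_hz if f > 0]  # assumed: 0 marks an unvoiced frame
    if len(voiced) < 2:
        return 0.0
    return pstdev(voiced) / mean(voiced)


def mean_abs_delta(f0_hz):
    """Average absolute pitch change (Hz) between adjacent voiced frames."""
    deltas = [
        abs(b - a)
        for a, b in zip(f0_hz, f0_hz[1:])
        if a > 0 and b > 0  # skip pairs that touch an unvoiced frame
    ]
    return mean(deltas) if deltas else 0.0


def pause_durations(word_times):
    """Gaps in seconds between the end of one word and the start of the next."""
    return [
        max(0.0, next_start - prev_end)
        for (_, prev_end), (next_start, _) in zip(word_times, word_times[1:])
    ]
```

A very low `pitch_variability` or `mean_abs_delta` would be consistent with the monotonous or overly smooth delivery described above, while the spread of `pause_durations` gives a crude view of timing irregularities. Any decision threshold on these values would need to be calibrated on labeled data.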

Social Engineering Vector Detection

Here, the verbal and persuasive aspects of scam calls are analyzed. Indicators examined include the creation of urgency, emotional manipulation, appeals to authority combined with demands, and personalized scam content [5]. AI-generated scam scripts reuse these traditional phishing tactics, but deliver them with improved coherence and fluency.
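A minimal sketch of how such indicators might be flagged in a call transcript is given below. It uses plain keyword patterns, which is a deliberate simplification; the categories follow the indicators listed above, but the specific patterns in `CUE_PATTERNS` are hypothetical examples rather than the study's actual detector.

```python
import re

# Hypothetical keyword patterns per social engineering indicator.
CUE_PATTERNS = {
    "urgency": [r"\bimmediately\b", r"\bright now\b", r"\burgent\b"],
    "authority": [r"\bIRS\b", r"\bbank\b", r"\bfraud department\b"],
    "emotional_pressure": [r"\barrest(ed)?\b", r"\bsuspended\b", r"\blegal action\b"],
    "demand": [r"\bpassword\b", r"\bPIN\b", r"\bgift cards?\b"],
}


def detect_cues(transcript):
    """Return {indicator: [matched phrases]} for indicators found in the text."""
    found = {}
    for category, patterns in CUE_PATTERNS.items():
        hits = [
            match.group(0).lower()
            for pattern in patterns
            for match in re.finditer(pattern, transcript, flags=re.IGNORECASE)
        ]
        if hits:
            found[category] = hits
    return found
```

Because AI-generated scripts are described as more coherent and fluent than traditional ones, keyword matching alone is unlikely to be sufficient in practice; it is shown here only to make the indicator categories concrete.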

Cross-Modal Verification

Cross-modal verification combines audio features, contextual metadata, and interaction patterns. For example, a caller claiming to represent a bank should be cross-validated against the caller ID and behavioral patterns. A mismatch between the voice profile and the identity the scammer claims is flagged, which makes the scam easier to detect.
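The bank example can be sketched as a small rule-based check. Everything here is an illustrative assumption: the `CallEvidence` fields, the `KNOWN_NUMBERS` registry with its placeholder phone number, and the 0.5 voice-score threshold are not taken from the study.

```python
from dataclasses import dataclass


@dataclass
class CallEvidence:
    """Hypothetical evidence gathered for one call."""
    claimed_org: str              # organization the caller claims to represent
    caller_id_number: str         # number presented by the network
    synthetic_voice_score: float  # 0.0 (natural) to 1.0 (synthetic), from an upstream detector
    asks_for_credentials: bool    # behavioral signal taken from the conversation


# Hypothetical registry of numbers published by each organization.
KNOWN_NUMBERS = {"Example Bank": {"+18005550100"}}


def cross_modal_mismatches(evidence, known_numbers=None, voice_threshold=0.5):
    """List the inconsistencies between claimed identity, metadata, and audio."""
    registry = KNOWN_NUMBERS if known_numbers is None else known_numbers
    mismatches = []
    expected = registry.get(evidence.claimed_org)
    if expected is None:
        mismatches.append("claimed organization not in registry")
    elif evidence.caller_id_number not in expected:
        mismatches.append("caller ID does not match claimed organization")
    if evidence.synthetic_voice_score >= voice_threshold:
        mismatches.append("voice profile looks synthetic")
    if evidence.asks_for_credentials:
        mismatches.append("caller requests credentials")
    return mismatches
```

An empty list means the modalities agree with each other; the more mismatches are returned, the stronger the case for treating the call as a scam.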

Classification and Evaluation Framework

Authenticity Classification

Based on the extracted features, the audio samples were classified into three categories:

Legitimate Communication:

Audio files with an authentic voice and consistent context are placed in the legitimate communication category.

Suspicious AI-assisted Communication:

Audio files that are partially manipulated are placed in the suspicious AI-assisted communication category.

Fully AI-generated scam calls:

Fully deepfake audio files are placed in this category.
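The three categories above can be illustrated with a simple decision rule over per-segment detector scores. This is a sketch under stated assumptions: it presumes an upstream model assigns each audio segment a synthetic-likelihood score between 0 and 1, and the thresholds (`segment_threshold`, `low`, `high`) are placeholder values, not parameters reported by the study.

```python
LEGITIMATE = "Legitimate Communication"
SUSPICIOUS = "Suspicious AI-assisted Communication"
FULLY_AI = "Fully AI-generated scam call"


def classify_call(segment_scores, segment_threshold=0.5, low=0.1, high=0.9):
    """Map per-segment synthetic scores to one of the three categories.

    A segment counts as synthetic when its score reaches segment_threshold.
    The call is then labeled by the fraction of synthetic segments.
    """
    if not segment_scores:
        raise ValueError("at least one segment score is required")
    flagged = sum(score >= segment_threshold for score in segment_scores)
    fraction = flagged / len(segment_scores)
    if fraction <= low:
        return LEGITIMATE
    if fraction >= high:
        return FULLY_AI
    return SUSPICIOUS  # some, but not all, segments look manipulated
```

Working at segment level is one way to separate partially manipulated audio, where only some segments are synthetic, from fully generated calls.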

Deepfake-Specific Detection

This detection framework specifically targets the deepfake indicators, which include:

Overly Smooth Speech Patterns:

Voice samples that lack human-like disfluencies fall under this indicator.

Multilingual Inconsistencies:

A multilingual inconsistency occurs when a cloned voice attempts cross-language translation.

Response Timing Mismatches:

Unnatural delays, or replies that arrive faster than is humanly possible, do not occur in natural speech, so samples showing them are flagged under this indicator.
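Two of the indicators above, missing disfluencies and response timing mismatches, lend themselves to a short sketch. The filler-word list and the latency bounds (`min_human_s`, `max_natural_s`) are assumed placeholder values chosen for illustration and would need calibration on real call data.

```python
import re

# Assumed list of common English filler words.
FILLERS = {"um", "uh", "er", "erm", "hmm"}


def disfluency_rate(transcript):
    """Fraction of words in the transcript that are filler words."""
    words = re.findall(r"[a-z']+", transcript.lower())
    if not words:
        return 0.0
    return sum(word in FILLERS for word in words) / len(words)


def timing_flags(latencies_s, min_human_s=0.2, max_natural_s=3.0):
    """Label each response latency (seconds) as too_fast, too_slow, or ok."""
    flags = []
    for latency in latencies_s:
        if latency < min_human_s:
            flags.append("too_fast")   # quicker than a person could plausibly reply
        elif latency > max_natural_s:
            flags.append("too_slow")   # unnaturally long delay
        else:
            flags.append("ok")
    return flags
```

A disfluency rate of zero across a long conversation, or repeated `too_fast` flags, would be consistent with the overly smooth speech and timing mismatches described above.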

Evaluation Metrics

Performance was evaluated using both standard and scam-specific metrics. The standard metrics are accuracy, precision, recall, and F1-score. The scam-specific metrics are:

Phishing Success Rate Reduction (PSRR):

PSRR measures the framework's ability to stop a scam in progress before the victim shares sensitive data.

Voice Clone Identification Rate (VCIR):

VCIR measures how often cloned voices are correctly identified within scam scenarios, so that the scam call is detected. The evaluation results are shown in Table 2 below:

Table 2: Performance Evaluation Results

| Classification Category | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) | PSRR (%) | VCIR (%) |
| --- | --- | --- | --- | --- | --- | --- |
| Legitimate Communication | 94.2 | 92.8 | 95.1 | 93.9 | N/A | N/A |
| Suspicious AI-assisted | 87.6 | 85.4 | 89.3 | 87.3 | 78.5 | 82.1 |
| Fully AI-generated Scam | 91.8 | 93.2 | 90.7 | 91.9 | 89.2 | 91.5 |
| Overall Framework | 91.2 | 90.5 | 91.7 | 91.0 | 83.8 | 86.8 |

Note: PSRR – Phishing Success Rate Reduction; VCIR – Voice Clone Identification Rate
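The F1-scores in Table 2 follow from the reported precision and recall through the standard harmonic-mean formula, which the sketch below recomputes. The article does not state how the "Overall Framework" row was derived; an unweighted mean across the three categories is assumed here, and it reproduces the reported overall precision, recall, and F1-score to one decimal place.

```python
# Precision, recall, and F1-score (%) per category, transcribed from Table 2.
TABLE_2 = {
    "Legitimate Communication": {"precision": 92.8, "recall": 95.1, "f1": 93.9},
    "Suspicious AI-assisted": {"precision": 85.4, "recall": 89.3, "f1": 87.3},
    "Fully AI-generated Scam": {"precision": 93.2, "recall": 90.7, "f1": 91.9},
}


def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def macro_average(rows, metric):
    """Unweighted mean of one metric across categories (assumed aggregation)."""
    return sum(row[metric] for row in rows.values()) / len(rows)
```

For example, `f1_score(92.8, 95.1)` rounds to 93.9, matching the first row, and `macro_average(TABLE_2, "precision")` rounds to 90.5, matching the overall row.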

Countermeasure Integration

Technical Defenses

Several defense mechanisms were integrated into the framework, including voice spoofing detection systems, real-time caller verification systems, and anomaly-based monitoring.
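To make the idea of anomaly-based monitoring concrete, the sketch below flags a caller whose current call volume deviates sharply from its own history, using a z-score. The choice of call volume as the monitored signal, the function name, and the threshold of 3.0 are all assumptions for illustration; the article does not describe which signals or algorithms the framework monitors.

```python
from statistics import mean, pstdev


def is_anomalous(history, current, z_threshold=3.0):
    """Return True when `current` lies far outside the historical pattern.

    `history` holds past observations (for example, calls placed per hour
    by one number); `current` is the newest observation.
    """
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        return current != baseline  # any change from a constant baseline
    return abs(current - baseline) / spread > z_threshold
```

A number that normally places about five calls per hour and suddenly places forty would be flagged, which is the kind of burst that automated scam campaigns could produce.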

Policy and Regulation

The policy and regulation component stresses the importance of legal and regulatory interventions, drawing on international initiatives such as the UK Online Safety Act and U.S. legislation addressing the misuse of deepfakes [4].

Conclusion

AI-generated voices have opened a new path for phishing attacks by enabling realistic imitation and scalable automation. To detect such forgeries, this article proposed an integrated methodology that combines threat modeling, multi-modal feature analysis, and authenticity classification. The framework supports the detection of cloned voices and manipulation patterns, and pairs that detection with technical solutions and regulatory actions against such misuse. The study also underlines the need for proactive defenses against AI-generated voice phishing, bringing together experimental findings, multiple modalities, and threat analysis through integrated tools. Addressing these challenges is essential to protect individuals and organizations against new forms of cyber-enabled fraud.

References

- Richard Fang, Dylan Bowman, and Daniel Kang. Voice-enabled AI agents can perform common scams. arXiv preprint arXiv:2410.15650, 2024.

- Genesis Gregorious Genelza. A systematic literature review on AI voice cloning generator: A game-changer or a threat? Journal of Emerging Technologies, 4(2):54–61, 2024.

- Mumtaz Hussain and Tariq Rahim Soomro. AI-powered cybercrime: A survey on emerging threats, tools & techniques for countermeasures. In 2024 26th International Multi-Topic Conference (INMIC), pages 1–6. IEEE, 2024.

- Elton Chizindu Mpi. Digital rights: How fraudsters exploit artificial intelligence for fraud and deception.

- Jingru Yu, Yi Yu, Xuhong Wang, Yilun Lin, Manzhi Yang, Yu Qiao, and Fei-Yue Wang. The shadow of fraud: The emerging danger of AI-powered social engineering and its possible cure. arXiv preprint arXiv:2407.15912, 2024.

- Sai, K. M., Gupta, B. B., Hsu, C. H., & Peraković, D. (2021, December). Lightweight Intrusion Detection System In IoT Networks Using Raspberry pi 3b+. In SysCom (pp. 43-51).

- Keesari, T., Goyal, M. K., Gupta, B., Kumar, N., Roy, A., Sinha, U. K., … & Goyal, R. K. (2021). Big data and environmental sustainability based integrated framework for isotope hydrology applications in India. Environmental Technology & Innovation, 24, 101889.

- Gupta, B. B., & Gupta, D. (Eds.). (2020). Handbook of Research on Multimedia Cyber Security. IGI Global.

Cite As

Kalyan C S N (2025) AI-Generated Voices in Scam Calls: Phishing Gets a New Voice, Insights2Techinfo, pp.1