By: Chandan Sharma, Shivang Panwar

Chandigarh College of Engineering and Technology, Chandigarh, India

Abstract:

As transformer-based AI, exemplified by ChatGPT, continues to permeate various domains, concerns regarding authenticity and explainability are on the rise. It is crucial to put in place robust detection, verification, and explainability mechanisms to counteract the potential harms stemming from AI-generated inauthentic content and scientific discoveries. These dangers include the spread of disinformation and misinformation and the possibility of generating unreproducible research results. Urgent action is needed to establish and uphold ethical standards, fostering trust and transparency in AI applications. By prioritizing these efforts, we can harness the transformative power of AI for the advancement of science and society while mitigating its negative repercussions. Additionally, fostering collaboration among technologists, policymakers, and domain experts is essential to develop comprehensive solutions that balance innovation with responsibility. This collaborative approach will facilitate the creation of effective policies and frameworks to safeguard information authenticity in the age of AI, promoting a climate of trust and accountability in the digital landscape.

Keywords: Machine Learning, Generative AI

- Introduction

The rapid advancement of generative artificial intelligence (AI) technologies, exemplified by transformer-based models like ChatGPT, has revolutionized content creation across diverse domains, from text generation to image synthesis and beyond. While these AI systems demonstrate remarkable capabilities in producing content that mimics human-like creativity [1], they also raise significant concerns regarding authenticity and the potential for misuse.

In recent years, the proliferation of AI-generated content has brought to the forefront the urgent need to safeguard authenticity and mitigate the potential harms associated with the dissemination of false or misleading information. The ability of AI algorithms to create highly convincing and seemingly genuine content poses profound challenges to the integrity of digital information, threatening to exacerbate problems such as misinformation, disinformation, and the erosion of trust in online communication channels.

As AI continues to evolve and permeate various aspects of our lives, addressing these challenges becomes increasingly urgent. Ensuring the authenticity of AI-generated content is not merely a technical issue but a multifaceted endeavor that calls for a holistic approach encompassing technological, ethical, legal, and societal considerations.

In this context, effective strategies and mechanisms must be devised to verify the authenticity of AI-generated outputs, mitigate the risks of misinformation, and promote responsible use of AI technology. These efforts entail developing robust detection and verification tools capable of distinguishing between genuine and AI-generated content [8], implementing transparent and accountable practices for content creation and dissemination, and fostering digital literacy and critical thinking skills to empower individuals to navigate the complexities of an AI-mediated information landscape.

Furthermore, addressing the challenges of authenticity in AI-generated content necessitates collaboration and coordination among diverse stakeholders, including researchers, technologists, policymakers, educators, and civil society organizations. By working together, these stakeholders can develop comprehensive frameworks, guidelines, and standards that uphold the integrity of digital information and promote ethical and responsible AI practices.

In this exploration, we delve into the complexities surrounding the authenticity of AI-generated content, examining the underlying challenges, exploring current strategies and initiatives aimed at mitigating the risks, and proposing strategies for fostering a more trustworthy and accountable AI ecosystem. Through these collective efforts, we aim to harness the transformative potential of AI while safeguarding the authenticity and integrity of digital information for the benefit of society.

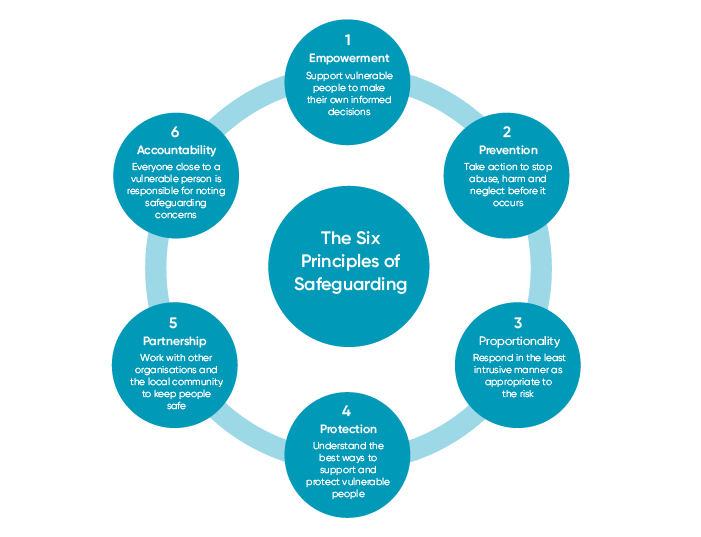

- PERSPECTIVE ON SAFEGUARDING GENUINE SCIENCE:

- Transparency in methodology:

Transparency in methodology means providing detailed documentation of the data sources, preprocessing techniques, feature engineering, model architectures, hyperparameters, and optimization techniques used in AI models. This transparency allows other researchers to understand and potentially replicate the experiments, thereby verifying the authenticity of the results.
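One way to make such documentation concrete is a machine-readable manifest stored alongside the results. The sketch below is only an illustration: the `ExperimentManifest` class, its field names, and the example values are all hypothetical and not taken from any particular tool.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass
class ExperimentManifest:
    """Hypothetical record of the items listed above as worth documenting."""
    data_source: str
    preprocessing: list
    features: list
    model_architecture: str
    hyperparameters: dict
    optimizer: str

    def to_json(self) -> str:
        # sort_keys gives a stable serialization, so identical settings
        # always produce identical text.
        return json.dumps(asdict(self), sort_keys=True, indent=2)

    def fingerprint(self) -> str:
        # SHA-256 of the serialized manifest; it changes if any field changes.
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


# All values below are placeholders chosen for illustration.
manifest = ExperimentManifest(
    data_source="example-corpus-v1 (placeholder name)",
    preprocessing=["lowercase", "strip_html"],
    features=["tfidf_unigrams"],
    model_architecture="logistic_regression",
    hyperparameters={"C": 1.0, "max_iter": 200},
    optimizer="lbfgs",
)
print(manifest.to_json())
print(manifest.fingerprint())
```

Publishing the fingerprint with a result lets a reader confirm that a replication used exactly the documented settings.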

- Reproducibility:

Reproducibility in scientific research involves a multifaceted approach aimed at ensuring the robustness and reliability of AI-generated findings. It covers not only the replication of experiments but also various related activities that contribute to the validation and verification of research outcomes.

One such aspect is the meticulous documentation of experimental procedures, including detailed descriptions of data preprocessing steps, model architectures, hyperparameters, and optimization techniques [5]. By providing comprehensive documentation, researchers enable others to replicate the experiments with precision, ensuring consistency and accuracy in the results obtained.

Additionally, reproducibility efforts often involve sharing datasets, code repositories, and model implementations with the research community. Open access to data and code enables transparency and collaboration, allowing other researchers to verify and validate the findings independently. Moreover, it promotes the adoption of best practices and fosters innovation by permitting the reuse and refinement of existing methodologies.
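Two small habits support independent verification of shared data and code: publishing a checksum of the dataset, and fixing random seeds so that a rerun gives the same numbers. The following is a minimal sketch under those assumptions; the function names and the toy "experiment" are invented for illustration.

```python
import hashlib
import random


def dataset_checksum(rows):
    """SHA-256 over the rows, so others can confirm they hold the same data."""
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return digest.hexdigest()


def run_experiment(rows, seed):
    """Toy experiment: seeded 80/20 shuffle split, returning the test-set mean."""
    rng = random.Random(seed)  # local generator, independent of global state
    shuffled = list(rows)
    rng.shuffle(shuffled)
    cut = int(0.8 * len(shuffled))
    test = shuffled[cut:]
    return sum(test) / len(test)


rows = list(range(100))
print(dataset_checksum(rows))
# Two runs with the same data and the same seed give the same result.
print(run_experiment(rows, seed=42) == run_experiment(rows, seed=42))  # True
```

A replication that reports a different checksum is working from different data, which narrows down where a discrepancy comes from.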

- Inauthentic content detection:

The need to detect inauthentic data and fake reports is rising at an alarming rate. Many tools are now used to distinguish authentic data from fake messages, and many algorithms have been developed at scale in response to data breaches occurring at the global level [2]. Even before the advent of generative AI, research on fake news and fake science had already gained significant traction.

- Computational and fact-checking vs human checking:

This section discusses the challenges of verifying AI-generated results through human checking. It is difficult for humans to keep up with the scale and speed at which AI tools generate content, and the difficulty is compounded by how realistic and engaging that content is. It describes an experiment in which an AI system generated stories on specific topics and produced polished narratives that presented concrete results in domain-appropriate terms.

However believable the AI-generated output appears, human readers cannot reliably check it against the original source text. This part therefore argues against prioritizing human checking to validate AI-generated output and in favor of computational solutions that draw on existing human knowledge encoded in knowledge bases, gold-standard databases [3], and domain-specific ontologies. Ground truth derived computationally from these sources can support solutions that deliver fact-checking more efficiently and effectively than relying solely on human checking.

Overall, this highlights the need for new approaches to fact-checking in the face of AI-generated output, and it recommends integrating existing computational methods with encoded human knowledge to overcome the challenges posed by the accelerating pace of AI systems.
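The idea of checking claims against encoded human knowledge can be sketched as a lookup of subject-relation-object triples. Everything here is an assumption made for illustration: the triple format, the tiny in-memory `KNOWLEDGE_BASE`, and the function names. A real system would query a curated database or ontology.

```python
from typing import Set, Tuple

Triple = Tuple[str, str, str]

# Tiny stand-in for a curated knowledge base (illustrative entries only).
KNOWLEDGE_BASE: Set[Triple] = {
    ("aspirin", "treats", "headache"),
    ("insulin", "regulates", "blood glucose"),
}


def normalize(triple: Triple) -> Triple:
    """Lowercase and trim each part so trivial variations still match."""
    subject, relation, obj = (part.strip().lower() for part in triple)
    return (subject, relation, obj)


def check_claim(claim: Triple, kb: Set[Triple] = KNOWLEDGE_BASE) -> str:
    """Return 'supported' if the claim is in the knowledge base, else 'unverified'."""
    return "supported" if normalize(claim) in kb else "unverified"


claims = [
    ("Aspirin", "treats", "Headache"),
    ("aspirin", "cures", "cancer"),
]
for claim in claims:
    print(claim, "->", check_claim(claim))
```

The sketch deliberately returns "unverified" rather than "false" for unknown claims, since absence from a knowledge base does not show that a statement is wrong.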

- Disinformation:

One risk that a few generative AI companies have acknowledged is that their systems will likely be exploited for spreading mis- and disinformation (defining the former as falsehoods and the latter as falsehoods knowingly disseminated to deceive). In its GPT-4 technical paper, OpenAI stated that “the profusion of false information from LLMs – either because of intentional disinformation, societal biases, or hallucinations [7] – has the potential to cast doubt on the whole information environment, threatening our ability to distinguish fact from fiction.” In his Senate testimony, the company’s CEO, Altman, said that manipulation of voters during an election year “is one of my areas of greatest concern.” More broadly, the amplification of falsehoods will intensify the erosion of trust in political leaders and democratic institutions.

- Benchmarking against baseline:

Benchmarking against baselines is a critical step in evaluating the quality and authenticity of AI-generated findings. This process involves comparing the results obtained from AI models against established benchmarks, existing literature, or human expert performance to assess their effectiveness and reliability in specific tasks or domains.

By benchmarking AI-generated results against established standards or performance metrics, researchers can gain insight into the capabilities and limitations of their models. This comparative analysis identifies areas where AI models excel or fall short relative to existing benchmarks or human performance levels.

Moreover, benchmarking provides a standard reference point for evaluating the performance of AI models across different datasets, experimental conditions, or research methodologies. By establishing a common set of evaluation criteria [9], researchers can objectively assess the quality and consistency of AI-generated findings and compare them with results obtained using alternative approaches or techniques.
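As a small illustration of comparing a model with a baseline under one shared metric, the sketch below scores hypothetical model predictions against a majority-class baseline. The labels and predictions are made up, and accuracy is only one of many possible evaluation criteria.

```python
from collections import Counter


def accuracy(predictions, labels):
    """Fraction of predictions that match the reference labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def majority_baseline(train_labels, n):
    """Predict the most frequent training label for every test item."""
    most_common_label = Counter(train_labels).most_common(1)[0][0]
    return [most_common_label] * n


# Made-up data for illustration only.
train_labels = ["real", "real", "real", "fake"]
test_labels = ["real", "fake", "real", "fake", "real"]
model_predictions = ["real", "fake", "real", "real", "real"]

baseline_acc = accuracy(majority_baseline(train_labels, len(test_labels)), test_labels)
model_acc = accuracy(model_predictions, test_labels)
print(f"baseline={baseline_acc:.2f} model={model_acc:.2f} gain={model_acc - baseline_acc:+.2f}")
```

Reporting the gain over a trivial baseline, rather than the raw score alone, shows how much of the result the model actually contributes.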

- Description of diagram (as shown in Fig 2):

- Reinforcement learning (RL) requires an agent to make a sequence of decisions in a given environment so as to maximize cumulative reward. The agent learns through trial and error, adjusting its policy based on feedback. Key components include agents, environments, states, actions, rewards, policies, and value functions, enabling applications in fields as diverse as games, robotics, and manufacturing.

- In reinforcement learning, a reward is a scalar feedback signal from the environment to the agent. It guides the agent by indicating whether the action taken in a given situation was desirable or undesirable, reflecting the immediate benefit or cost of that action. Rewards can be positive (encouraging certain behaviors) or negative (discouraging certain behaviors), and they shape the agent’s decision-making so as to maximize cumulative reward in the long run.

- Machine learning is a subset of artificial intelligence in which algorithms learn patterns and make predictions or decisions from data without being explicitly programmed. Models are trained on examples so that they generalize to new data. Applications cover areas such as image recognition, natural language processing, health care, finance, and intervention programs.

- In reinforcement learning, the environment typically provides rewards and punishments based on the agent’s actions. Rewards signal desired outcomes and encourage similar actions in the future, while punishments signal undesirable consequences and deter the associated behaviors.
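The cumulative reward described above can be made concrete with a short calculation. The sketch below assumes the common discounted formulation, in which a reward received t steps in the future is weighted by gamma to the power t. The discount factor `gamma` and the example rewards are assumptions for illustration, not values from the diagram.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of gamma**t * r_t over a sequence of rewards r_0, r_1, ..."""
    total = 0.0
    # Work backwards so each step is: reward now + gamma * everything after.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Positive rewards encourage a behavior, negative ones discourage it.
episode_rewards = [1, -1, 2]
print(discounted_return(episode_rewards, gamma=0.5))  # 1 - 0.5 + 0.5 = 1.0
print(discounted_return(episode_rewards, gamma=1.0))  # plain sum = 2.0
```

With `gamma` below 1 the agent values immediate rewards more than distant ones; with `gamma` equal to 1 the return is the plain sum of rewards.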

- Research agenda:

- Detection technologies: Develop algorithms and tools to identify AI-created content and distinguish it from human-created content.

- Verification mechanisms: Establish mechanisms to verify AI-generated outputs and ensure compliance with ethical requirements.

- Explainability framework: Create a framework for explaining how AI systems generate content, to improve transparency and awareness among users.

- Policy and regulatory interventions: Explore legal mechanisms and policy interventions to address the proliferation of AI-generated content and promote the responsible use of AI [2].

Conclusion

Robust detection technologies, verification methods, explainability frameworks, and policy mechanisms are needed to protect the authenticity of AI-generated content and limit its potential harms. This effort is an important step towards creating transparency, accountability, and trust in AI systems and their outputs. By fostering research in these areas and collaborating with stakeholders across sectors, we can promote the responsible use of AI, support ethical standards, and reduce the risk of inaccuracies, misinformation, and harmful changes to the digital media ecosystem. As we continue to adopt AI technologies, it is also important to prioritize verification methods and techniques.

References:

- Wu, T., He, S., Liu, J., Sun, S., Liu, K., Han, Q. L., & Tang, Y. (2023). A brief overview of ChatGPT: The history, status quo and potential future development. IEEE/CAA Journal of Automatica Sinica, 10(5), 1122-1136.

- Hamed, A. A., Zachara-Szymanska, M., & Wu, X. (2024). Safeguarding authenticity for mitigating the harms of generative AI: Issues, research agenda, and policies for detection, fact-checking, and ethical AI. iScience.

- Althabiti, S., Alsalka, M. A., & Atwell, E. (2024). Ta’keed: The First Generative Fact-Checking System for Arabic Claims. arXiv preprint arXiv:2401.14067.

- Raval, K. J., Jadav, N. K., Rathod, T., Tanwar, S., Vimal, V., & Yamsani, N. (2023). A survey on safeguarding critical infrastructures: Attacks, AI security, and future directions. International Journal of Critical Infrastructure Protection, 100647.

- Van der Zant, T., Kouw, M., & Schomaker, L. (2013). Generative artificial intelligence (pp. 107-120). Springer Berlin Heidelberg.

- Hamed, A. A., Zachara-Szymanska, M., & Wu, X. (2024). Safeguarding authenticity for mitigating the harms of generative AI: Issues, research agenda, and policies for detection, fact-checking, and ethical AI. iScience.

- Brundage, M., Avin, S., Clark, J., Toner, H., Eckersley, P., Garfinkel, B., … & Amodei, D. (2018). The malicious use of artificial intelligence: Forecasting, prevention, and mitigation. arXiv preprint arXiv:1802.07228.

- Thiebes, S., Lins, S., & Sunyaev, A. (2021). Trustworthy artificial intelligence. Electronic Markets, 31, 447-464.

- Brundage, M., Avin, S., Wang, J., Belfield, H., Krueger, G., Hadfield, G., … & Anderljung, M. (2020). Toward trustworthy AI development: mechanisms for supporting verifiable claims. arXiv preprint arXiv:2004.07213.

- Law, K. M., Ip, A. W., Gupta, B. B., & Geng, S. (Eds.). (2021). Managing IoT and mobile technologies with innovation, trust, and sustainable computing. CRC Press.

- Li, K. C., Gupta, B. B., & Agrawal, D. P. (Eds.). (2020). Recent advances in security, privacy, and trust for internet of things (IoT) and cyber-physical systems (CPS). CRC Press.

- Mourelle, L. M. (2022). Robotics and AI for Cybersecurity and Critical Infrastructure in Smart Cities. N. Nedjah, A. A. Abd El-Latif, & B. B. Gupta (Eds.). Springer.

- Gupta, B. B., & Srinivasagopalan, S. (Eds.). (2020). Handbook of research on intrusion detection systems. IGI Global.

- Raj, B., Gupta, B. B., Yamaguchi, S., & Gill, S. S. (Eds.). (2023). AI for big data-based engineering applications from security perspectives. CRC Press.

Cite As

Sharma C, Panwar S (2024) Safeguard authenticity for mitigating the harms of generating AI, Insights2Techinfo, pp.1