By: C S Nakul Kalyan, Asia University

Abstract

The rapid growth of fake news production has been facilitated by tools such as deep neural networks, which threaten the integrity of information by making it possible to fabricate news and sensitive content. To counter these threats, this article proposes a multidimensional defensive approach informed by an analysis of social risks. It combines high-accuracy deep learning models for image classification with a multilayered framework that uses blockchain technology and adversarial defense to detect generated fake news. The approach also examines existing detection techniques to assess how well they hold up against deepfakes and GANs. Mitigation techniques and a framework for integrating AI into newsrooms, with the required human oversight, are applied to ensure that the news provided is real. The main goal of this study is to use these combined techniques to protect public trust from the widespread fabrication of reality.

Keywords

AI-Generated News, Synthetic media, Deepfakes, Fabricated Reality, Deep Learning, Social Media.

Introduction

Digital media is facing a crisis of authenticity due to the rapid development of synthetic media generation. Using advanced techniques such as deep neural networks and Generative Adversarial Networks (GANs), AI systems can now generate fully fabricated, hyper-realistic content, including complex deepfakes that are nearly indistinguishable from real content [7]. This ability to fabricate news also threatens journalistic integrity, because it erodes the public’s trust in news outlets [1]. Given the threats posed by AI-generated news reports, a counter-strategy is needed [5]. By examining these threats, this article proposes a coherent multidimensional framework, built from current insights, to protect the information ecosystem. Table 1 summarizes the AI-generated media threats and the corresponding detection methods.

Table 1: AI-Generated Media Threats and Detection Methods

Threat Type | Generation Method | Detection Technique | Key Reference |

Visual Deepfakes | Diffusion Models, GANs | CNN-based Deep Learning | [3], [7] |

Audio Deepfakes | Voice Synthesis | Bi-Spectral & Cepstral Analysis | – |

Fabricated News Articles | Large Language Models | Multi-layered Framework | [2], [5] |

Political Misinformation | Deepfake Technology | Impact Analysis & Detection | [4] |

Proposed Methodology

Because AI-fabricated news is difficult to detect, several complementary approaches are needed: technical detection, ethical governance, and strategic analysis. The following models and frameworks are the key strategies proposed to address the threats posed by synthetic media, which can create realistic images and spread deepfakes.

Deep Learning Model for Image Content Detection

An important method for safeguarding the integrity of journalism is a high-accuracy deep learning model [3]. This technique is specifically designed to address the threat of AI-generated images, particularly those created with advanced diffusion models and used in news material.

Architecture and Training

This framework uses a Convolutional Neural Network (CNN) architecture trained on a variety of datasets that include both real and fake images [2]. The complete deep learning detection pipeline is shown in Figure 1.
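As a rough illustration of what a CNN-based classifier computes, the sketch below runs the forward pass of a single convolutional layer followed by ReLU, global average pooling, and a sigmoid output. It is not the model from [3]: the kernel, `WEIGHT`, and `BIAS` are hand-set, hypothetical values standing in for parameters that a real model would learn from labeled real and fake images, and the toy premise that high-frequency texture indicates a generated image is only an assumption made so the example has something to separate.

```python
import math

def conv2d(image, kernel):
    """Valid 2D cross-correlation of a grayscale image with a square kernel."""
    k = len(kernel)
    h, w = len(image), len(image[0])
    out = []
    for i in range(h - k + 1):
        row = []
        for j in range(w - k + 1):
            acc = 0.0
            for di in range(k):
                for dj in range(k):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out

def relu(fmap):
    """Element-wise max(0, v) over a feature map."""
    return [[max(0.0, v) for v in row] for row in fmap]

def global_avg_pool(fmap):
    """Collapse a feature map to its mean activation."""
    return sum(sum(row) for row in fmap) / (len(fmap) * len(fmap[0]))

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical, hand-set parameters (a trained CNN would learn these).
LAPLACIAN = [[0, -1, 0], [-1, 4, -1], [0, -1, 0]]
WEIGHT, BIAS = 4.0, -2.0

def fake_probability(image):
    """Conv -> ReLU -> global average pool -> sigmoid score in (0, 1)."""
    feature = global_avg_pool(relu(conv2d(image, LAPLACIAN)))
    return sigmoid(WEIGHT * feature + BIAS)

# Two toy 6x6 "images": a flat patch and a high-frequency checkerboard.
smooth = [[0.5] * 6 for _ in range(6)]
checker = [[float((i + j) % 2) for j in range(6)] for i in range(6)]

print(round(fake_probability(smooth), 3), round(fake_probability(checker), 3))
```

The flat patch produces no filter response and scores below 0.5, while the checkerboard scores above it. A production detector would stack many learned filters and be trained and validated on a labeled dataset.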

Objective

The main objective is to give news professionals a robust, practical tool that can detect and flag AI-generated images, strengthening media integrity and increasing public trust in visual news reporting [3].

Multi-Layered Combat Framework

Effectively detecting fake news produced by Generative AI (GAI) requires integrating multiple technical and analytical solutions [5].

Adversarial Defense Systems

These systems build a defense mechanism that reduces threats and detects changes in the patterns produced by fake news generators. The framework improves detection by combining adversarial training with Generative Adversarial Networks (GANs).
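To make the adversarial training idea concrete, here is a minimal sketch under stated assumptions. It trains a logistic-regression detector on clean samples plus perturbed copies generated with the Fast Gradient Sign Method (FGSM). FGSM, the two-feature toy data, and all hyperparameters are choices made for this illustration and are not taken from [5]. The GAN component of the framework is not covered here.

```python
import math

def sign(v):
    return (v > 0) - (v < 0)

def predict(w, b, x):
    """Probability that feature vector x belongs to the 'fake' class."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    z = max(-60.0, min(60.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))

def fgsm(w, b, x, y, eps):
    """Shift each feature by eps in the direction that increases the loss."""
    err = predict(w, b, x) - y  # gradient of cross-entropy w.r.t. the logit
    return [xi + eps * sign(err * wi) for xi, wi in zip(x, w)]

def train(data, eps=0.0, epochs=200, lr=0.1):
    """SGD on clean samples; if eps > 0, also on their FGSM counterparts."""
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            batch = [x]
            if eps > 0:
                batch.append(fgsm(w, b, x, y, eps))
            for xb in batch:
                err = predict(w, b, xb) - y
                w = [wi - lr * err * xi for wi, xi in zip(w, xb)]
                b -= lr * err
    return w, b

def accuracy(w, b, data, eps=0.0):
    """Accuracy on clean data (eps == 0) or on FGSM-attacked data."""
    hits = 0
    for x, y in data:
        x_eval = fgsm(w, b, x, y, eps) if eps > 0 else x
        hits += int((predict(w, b, x_eval) >= 0.5) == bool(y))
    return hits / len(data)

# Hypothetical two-feature samples: label 1 = fake, label 0 = real.
DATA = [([2.0, 1.5], 1), ([1.5, 2.5], 1), ([2.5, 2.0], 1),
        ([-2.0, -1.5], 0), ([-1.5, -2.5], 0), ([-2.5, -2.0], 0)]

w, b = train(DATA, eps=0.5)
clean_acc = accuracy(w, b, DATA)
robust_acc = accuracy(w, b, DATA, eps=0.5)
print(clean_acc, robust_acc)
```

Reporting accuracy on both clean and attacked inputs is the usual way to check whether adversarial training has bought any robustness. On real detectors the two numbers typically diverge far more than on this well-separated toy set.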

Source Provenance Confirmation

Source provenance confirmation uses blockchain technology to create a record of news articles, which is essential for verifying the authenticity and provenance of information sources and the articles themselves.
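The core data structure behind such a record can be sketched as a hash chain, in which every entry stores a fingerprint of the article and the hash of the previous entry. The sketch below is an assumption-laden illustration, not the system described in the source: `ProvenanceLedger` and its method names are hypothetical, it runs in a single process, and it omits the distributed consensus that makes a real blockchain tamper-resistant.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

def sha256_hex(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

class ProvenanceLedger:
    """Hypothetical append-only, hash-linked record of published articles."""

    def __init__(self):
        self.blocks = []

    def register(self, source, article_text):
        """Append a block that fingerprints the article and links to the previous block."""
        prev = self.blocks[-1]["block_hash"] if self.blocks else GENESIS_HASH
        block = {
            "index": len(self.blocks),
            "source": source,
            "content_hash": sha256_hex(article_text),
            "prev_hash": prev,
        }
        # The block hash covers every field above, including the link.
        block["block_hash"] = sha256_hex(json.dumps(block, sort_keys=True))
        self.blocks.append(block)
        return block

    def is_intact(self):
        """Recompute every hash and link; any edit to a stored block breaks the chain."""
        prev = GENESIS_HASH
        for block in self.blocks:
            body = {k: v for k, v in block.items() if k != "block_hash"}
            if block["prev_hash"] != prev:
                return False
            if block["block_hash"] != sha256_hex(json.dumps(body, sort_keys=True)):
                return False
            prev = block["block_hash"]
        return True

    def is_registered(self, article_text):
        """True if this exact text was registered by some source."""
        digest = sha256_hex(article_text)
        return any(b["content_hash"] == digest for b in self.blocks)

ledger = ProvenanceLedger()
ledger.register("Example Newsroom", "City council approves new budget.")
ledger.register("Example Newsroom", "Local team wins regional final.")
print(ledger.is_intact(), ledger.is_registered("City council approves new budget."))
```

Because the lookup compares exact hashes, even a one-word change to an article yields a different fingerprint, so an altered copy is not reported as registered.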

Acoustic Signal Analysis

This analysis uses advanced signal processing techniques such as bi-spectral and cepstral analysis to detect audio deepfakes. Using these higher-order statistical features, the techniques can distinguish real voices from fake ones. The components of the multilayered combat framework are given in Table 2.
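For readers unfamiliar with cepstral analysis, the sketch below computes the real cepstrum, defined as the inverse Fourier transform of the log-magnitude spectrum, using only the standard library. It covers the cepstral part only, not the bi-spectral part, and it is not a deepfake detector: the test signal is a hypothetical impulse with one echo, chosen because the cepstrum makes the echo delay visible as a peak. A real pipeline would extract such features from speech frames and pass them to a classifier.

```python
import cmath
import math

def dft(x, inverse=False):
    """Naive O(N^2) discrete Fourier transform (fine for short frames)."""
    n = len(x)
    direction = 1 if inverse else -1
    out = []
    for k in range(n):
        acc = sum(x[t] * cmath.exp(direction * 2j * math.pi * k * t / n)
                  for t in range(n))
        out.append(acc / n if inverse else acc)
    return out

def real_cepstrum(signal, eps=1e-12):
    """Inverse DFT of the log-magnitude spectrum of the signal."""
    spectrum = dft(signal)
    log_mag = [math.log(abs(v) + eps) for v in spectrum]  # eps guards log(0)
    return [c.real for c in dft(log_mag, inverse=True)]

# Hypothetical test frame: a unit impulse plus a half-amplitude echo
# delayed by 8 samples.
N, DELAY, GAIN = 64, 8, 0.5
frame = [0.0] * N
frame[0] = 1.0
frame[DELAY] = GAIN

ceps = real_cepstrum(frame)
# Search the first half of the quefrency axis, skipping index 0.
peak = max(range(1, N // 2 + 1), key=lambda q: ceps[q])
print(peak)
```

The strongest cepstral component away from the origin sits at the echo delay, which is the kind of periodic spectral structure that cepstral features expose.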

Table 2: Multi-Layered Combat Framework Components

Framework Layer | Primary Function | Technology Used | Detection Target |

Adversarial Defense | Pattern Detection & Threat Reduction | GANs, Adversarial Training | Fake News Patterns |

Source Provenance | Authenticity Verification | Blockchain Technology | News Article Origin |

Acoustic Analysis | Audio Deepfake Detection | Bi-Spectral & Cepstral Analysis | Synthetic Voices |

Visual Detection | Image Classification | CNN Architecture | AI-Generated Images |

Impact Analysis and Strategy Scrutiny

This analysis evaluates fundamental systems to build trust, authenticity, and security in online communication and the sharing of information.

Deepfake Impact Analysis

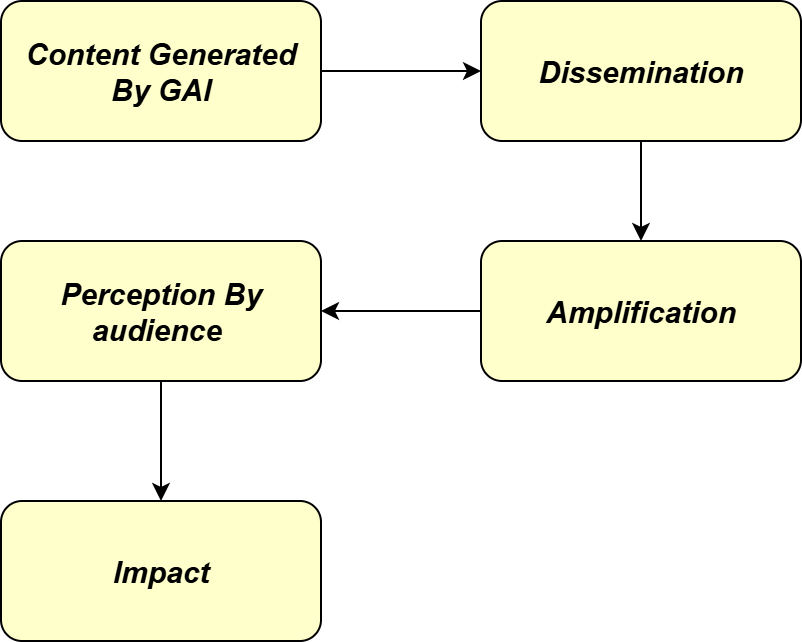

This method analyzes the observable impacts of AI-generated deepfakes on important social pillars such as political discourse, public opinion, and social media environments [4]. Figure 2 shows the flow from generation to societal impact.

Strategy Scrutiny

The efficacy and limitations of deepfake detection techniques are examined here. The main goal of this scrutiny is to identify important gaps and provide guidance for the efficient technical solutions needed to protect the integrity of digital media.

Framework for Ethical AI Integration in Newsrooms

This framework supports the responsible and transparent adoption of AI in the news creation and distribution process [6]. The ethical AI integration framework for newsrooms is listed in Table 3.

Table 3: Ethical AI Integration Framework for Newsrooms

AI Application | Function | Risk Level | Ethical Governance Required | Human Oversight |

News Collection | Data Gathering & Aggregation | Low | Basic Guidelines | Periodic Review |

Summarization | Content Condensation | Medium | Quality Standards | Regular Validation |

Fact-Checking | Information Verification | Medium | Accuracy Protocols | Continuous Monitoring |

Content Creation | Article Generation | High | Strict Ethical Standards | Mandatory Human Review |

AI Application Categorization

This approach analyzes AI tools and classifies them according to their roles in the newsroom, such as news collection, summarization, fact-checking, and content creation [6].
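One way a newsroom system could encode this categorization is as a small policy table keyed by application. The sketch below copies the rows of Table 3 into a dictionary. The names `OversightPolicy`, `POLICIES`, and `can_publish`, and the rule that only high-risk applications block publication until a human signs off, are hypothetical illustrations and are not part of the framework in [6].

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class OversightPolicy:
    function: str
    risk_level: str
    governance: str
    human_oversight: str

# Rows transcribed from Table 3.
POLICIES = {
    "news_collection": OversightPolicy(
        "Data Gathering & Aggregation", "Low",
        "Basic Guidelines", "Periodic Review"),
    "summarization": OversightPolicy(
        "Content Condensation", "Medium",
        "Quality Standards", "Regular Validation"),
    "fact_checking": OversightPolicy(
        "Information Verification", "Medium",
        "Accuracy Protocols", "Continuous Monitoring"),
    "content_creation": OversightPolicy(
        "Article Generation", "High",
        "Strict Ethical Standards", "Mandatory Human Review"),
}

def can_publish(application, human_approved):
    """Hypothetical gate: high-risk output needs explicit human approval."""
    policy = POLICIES[application]
    if policy.risk_level == "High":
        return human_approved
    return True

print(can_publish("summarization", human_approved=False))
print(can_publish("content_creation", human_approved=False))
```

Keeping the policy in data rather than scattered conditionals makes it easy to audit and to tighten, for example by also requiring sign-off for medium-risk applications.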

Ethical Governance

Ethical governance is a central component that addresses ethical issues such as algorithmic bias and data privacy. It ensures that AI implementation is guided by journalistic standards and human monitoring when reporting the news.

Explanatory Model for Synthetic Media Threats

This model combines an informational and a conceptual framework to give important stakeholders, such as IT specialists, the knowledge they need to anticipate, identify, and manage the threat.

Phenomenon Explanation

The primary purpose of the model is to explain how deepfakes and Generative Adversarial Networks (GANs) are used to generate or manipulate content [7]. This explanation is the basis on which the rest of the model works.

Conclusion

AI-generated content poses a major threat to information integrity, so swift detection algorithms are needed to distinguish real content from fake. This article proposed a multilayered framework that combines blockchain technology with high-accuracy deep learning algorithms for visual content identification. To reduce algorithmic bias, these technical approaches are guided by a steadfast commitment to ethical AI use and human oversight. Combining the ethical and technical requirements helps maintain the public’s faith and moves the information environment from one that is vulnerable to manipulation to one of strong, verifiable authenticity.

References

- Joel Frank, Franziska Herbert, Jonas Ricker, Lea Schönherr, Thorsten Eisenhofer, Asja Fischer, Markus Dürmuth, and Thorsten Holz. A representative study on human detection of artificially generated media across countries. In 2024 IEEE Symposium on Security and Privacy (SP), pages 55–73. IEEE, 2024.

- Hanen Himdi, Nuha Zamzami, Fatma Najar, Mada Alrehaili, and Nizar Bouguila. Arabic fake news dataset development: humans and AI generated contributions. IEEE Access, 2025.

- Tarun Jagadish and S Graceline Jasmine. Detection of AI-generated image content in news and journalism. In 2024 15th International Conference on Computing Communication and Networking Technologies (ICCCNT), pages 1–6. IEEE, 2024.

- Prakash L Kharvi. Understanding the impact of AI-generated deepfakes on public opinion, political discourse, and personal security in social media. IEEE Security & Privacy, 22(4):115–122, 2024.

- Sanjeev Kumar, Siva Sai, Vinay Chamola, Aanchal Gaur, Chitwan Agarwal, Kaizhu Huang, and Amir Hussain. Peeping into the future: Understanding and combating generative AI-based fake news. Cognitive Computation, 17(3):103, 2025.

- Sawsan Taha, Rahima Aissani, and Rania Abdallah. AI applications for news. In 2024 International Conference on Intelligent Computing, Communication, Networking and Services (ICCNS), pages 220–227. IEEE, 2024.

- Lucas Whittaker, Tim C Kietzmann, Jan Kietzmann, and Amir Dabirian. “All around me are synthetic faces”: the mad world of AI-generated media. IT Professional, 22(5):90–99, 2020.

- Gupta, B. B., Gupta, S., Gangwar, S., Kumar, M., & Meena, P. K. (2015). Cross-site scripting (XSS) abuse and defense: exploitation on several testing bed environments and its defense. Journal of Information Privacy and Security, 11(2), 118-136.

- Negi, P., Mishra, A., & Gupta, B. B. (2013). Enhanced CBF packet filtering method to detect DDoS attack in cloud computing environment. arXiv preprint arXiv:1304.7073.

- Gupta, B. B., Gupta, S., & Chaudhary, P. (2017). Enhancing the browser-side context-aware sanitization of suspicious HTML5 code for halting the DOM-based XSS vulnerabilities in cloud. International Journal of Cloud Applications and Computing (IJCAC), 7(1), 1-31.

Cite As

Kalyan C S N (2025) AI-Generated News Reports and Obituaries: Fabricating Reality Through Media, Insights2Techinfo, pp.1