By: Gaurav Chahal, Department of Computer Science & Engineering, Chandigarh College of Engineering and Technology, Chandigarh(160019) / Email: chhlgrv@gmail.com

Abstract

The rapid growth of IoT devices has produced a flood of highly sensitive, decentralized data. Standard approaches to machine learning require all collected data to be transmitted to a cloud server, which is inefficient and puts privacy at risk. In contrast, federated learning lets each device train a model locally and transmit only model updates, which can additionally be encrypted, to the central server; no raw data is transferred. Your data remains invisible, yet the system continues learning. This work provides an overview of the fundamental aspects of federated learning, including its architecture, privacy benefits, real-world applications, and open research questions. Drawing on recent advances in distributed deep learning and privacy-preserving AI, it presents federated learning as an essential component of the modern IoT ecosystem.

Keywords: IoT, Federated Learning, Privacy Preservation, Edge AI, Distributed Learning, Federated Averaging, Security, Differential Privacy

1. Introduction

When discussing technology today, one cannot ignore the IoT phenomenon. Billions of devices, ranging from wearables such as watches and fitness trackers to autonomous vehicles and robotic machinery, continuously transmit data. Machine learning (ML) is one of the most promising technologies to use in conjunction with IoT. Nevertheless, much of this data is highly confidential, as it includes personal information about individuals’ health, location, behavior, transactions, and much more [1].

Traditionally, the process was straightforward: collect all data from all devices and send it to the cloud, where enormous ML models are run to gain an overall understanding of what is happening. However, this approach has several major drawbacks. First, privacy is lost: if an attacker gains access to the server or intercepts the traffic, they gain access to everything. Second, responses are slow. For example, anomaly detection for factory equipment cannot wait for a distant server to respond. Finally, network usage skyrockets [2].

Federated Learning (FL): In Federated Learning, the rules of the game have been completely rewritten. Think of a classroom where each student (your IoT device) studies independently and takes their own notes. Rather than handing the entire notebook to the teacher (the aggregation server), each student sends only a summary, and the teacher combines all the summaries into one improved summary that is shared with the whole class [3].

2. Privacy & Security Threats in IoT

Centralized ML isn’t just inefficient, it’s risky. Here’s why:

Data Leakage & Inference Attacks: Once the data reaches the cloud, an attacker who breaches the server can access it directly or deduce personal information from the model’s output. A single data leak can compromise the entire database [2].

Model Inversion Attacks: Even if attackers never see the raw data, it is sometimes possible to reconstruct sensitive information by probing the model’s predictions, because models often “remember” patterns from the data they were trained on [7].

Data Poisoning: Attackers can inject manipulated or mislabeled samples into the training data, corrupting what the model learns and degrading or skewing its predictions.

Single Point of Failure: Depending on a single central server is dangerous; if it goes down or is breached, everyone loses [6].

Regulatory Pressure (GDPR/CCPA): Regulations such as GDPR and CCPA require organizations to minimize and control the personal information they hold. Centralized storage of all raw data is increasingly hard to justify [7].

3. Federated Learning: Concept & Workflow

Federated learning trains a machine learning model across multiple devices without exposing the data held on any individual device. Mathematically, every device optimizes the same global model using its own local data, and each device’s contribution is weighted by the amount of data it holds. The objective is to minimize the global loss function F(w), a weighted sum of the local loss functions of K devices:

$$F(w) = \sum_{k=1}^{K} \frac{n_k}{n} \, F_k(w)$$

where n is the total number of data samples across all devices, n_k is the local dataset size of device k, and F_k(w) is the local loss of device k.

Therefore, instead of a central server collecting all the raw data and computing gradients on it, each node computes its gradients independently and sends only the resulting update. The gradient acts as a hint: it indicates how the model should improve without directly exposing the data behind it.
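The weighted objective F(w) can be checked numerically. The following is a minimal sketch, assuming a one-parameter model y ≈ w·x with mean squared error as the local loss; the device names, the data, and the helper functions are hypothetical and chosen only for illustration. It shows that weighting each local loss by n_k/n gives the same value a centralized trainer would compute on the pooled data.

```python
# Hypothetical toy data: each device holds (x, y) pairs for a 1-D model y ≈ w * x.
device_data = {
    "thermostat": [(1.0, 2.1), (2.0, 3.9)],
    "wearable": [(1.0, 1.8), (3.0, 6.3), (4.0, 8.1), (5.0, 9.7)],
    "camera": [(2.0, 4.2)],
}


def local_loss(w, samples):
    """F_k(w): mean squared error on one device's samples."""
    return sum((w * x - y) ** 2 for x, y in samples) / len(samples)


def global_loss(w, data_by_device):
    """F(w) = sum over k of (n_k / n) * F_k(w)."""
    n = sum(len(samples) for samples in data_by_device.values())
    return sum(
        len(samples) / n * local_loss(w, samples)
        for samples in data_by_device.values()
    )


def pooled_loss(w, data_by_device):
    """Loss a centralized trainer would compute after pooling all samples."""
    pooled = [pair for samples in data_by_device.values() for pair in samples]
    return local_loss(w, pooled)


w = 2.0
print("federated objective:", global_loss(w, device_data))
print("pooled objective:   ", pooled_loss(w, device_data))
```

The two printed values agree up to floating-point rounding, which is why the n_k/n weights are used: the federated objective matches the centralized one even though no device shares its samples.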

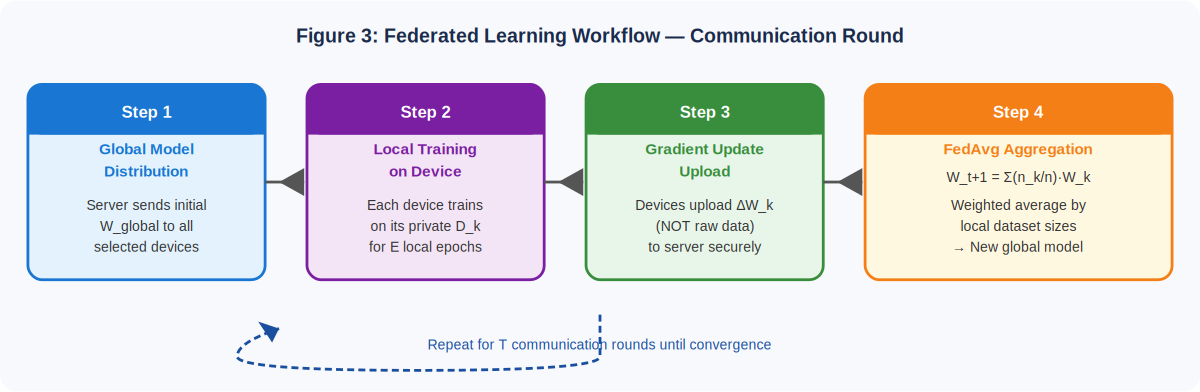

The workflow consists of four phases that are logically connected:

Local Training: Each device trains the model on its own data. Private and sensitive information never leaves the device, which keeps the raw data private.

Sharing of Model Parameters: Devices send only parameter updates and/or gradients to the server, never the original data. This can significantly reduce the amount of data transmitted [4].

Federated Averaging (FedAvg): The server collects the updates and averages them, giving more weight to devices with larger datasets. The resulting model therefore reflects each device in proportion to the amount of data it contributed [9][3].

Redistribution of the Global Model: The improved global model is sent back to all devices, and the cycle repeats until the model performs the task well enough.

Every part of FL is intentional: local training protects privacy, update sharing saves network resources, FedAvg keeps things fair, and redistribution spreads knowledge without ever exposing anybody’s full dataset [7].
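The four phases can be sketched in a few lines of plain Python. This is a simplified illustration, not the reference FedAvg implementation: it assumes a one-parameter model y ≈ w·x, full participation of every device in every round, and hypothetical function names, learning rate, and data.

```python
def local_train(w, samples, lr=0.01, epochs=5):
    """Phase 1: a few gradient-descent steps on one device's private data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in samples) / len(samples)
        w -= lr * grad
    return w


def fedavg(local_weights, sizes):
    """Phase 3: average local weights, weighting each by its dataset size."""
    n = sum(sizes)
    return sum(w_k * n_k / n for w_k, n_k in zip(local_weights, sizes))


def run_federated(devices, rounds=20, w0=0.0):
    """Repeat train -> share -> aggregate -> redistribute for several rounds."""
    w = w0
    for _ in range(rounds):
        # Phase 2: only the trained weight leaves each device, not its samples.
        local_weights = [local_train(w, samples) for samples in devices]
        # Phase 4: the aggregated weight becomes the next round's starting point.
        w = fedavg(local_weights, [len(samples) for samples in devices])
    return w


# Hypothetical devices whose data all follow y = 3 * x.
devices = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)],
    [(2.0, 6.0)],
]
print("learned weight:", run_federated(devices))
```

With this toy data the learned weight approaches 3, the slope shared by all devices, even though the server only ever sees one number per device per round.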

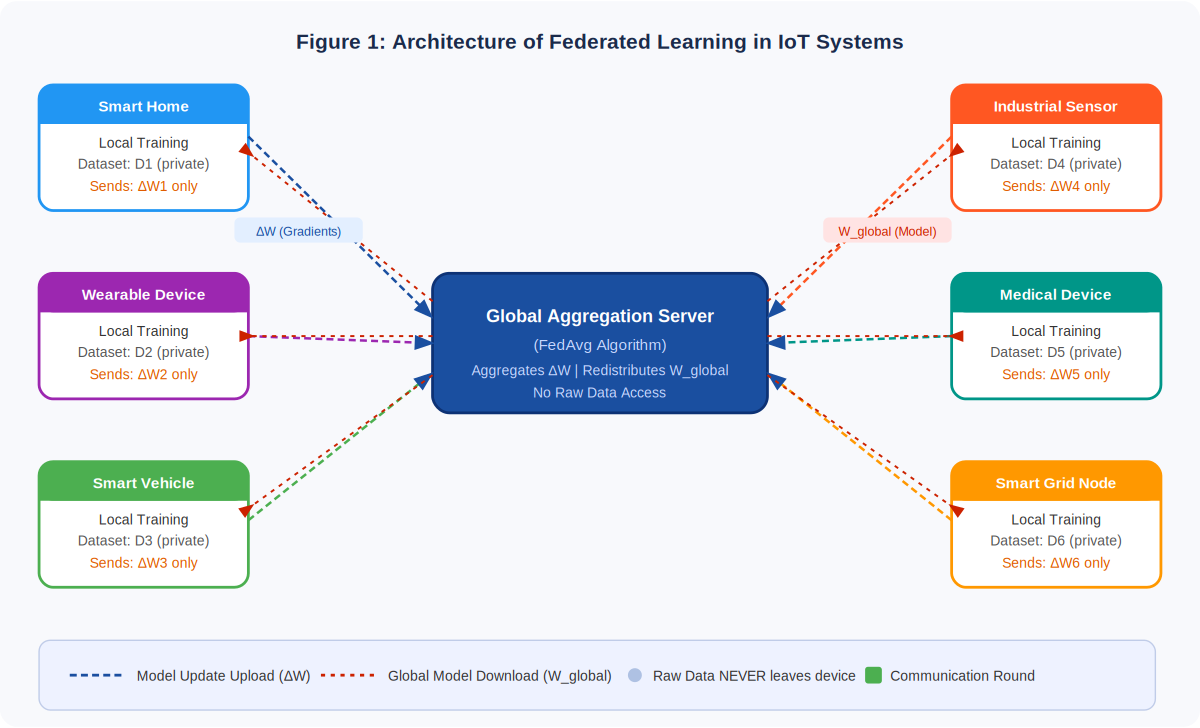

FL Architecture in IoT Systems

IoT systems that use federated learning have three key elements, usually arranged as layers in an edge computing framework [1]:

Layer of Edge Devices: Consists of IoT devices that conduct local processing and modeling.

Layer of Communication Protocols: Facilitates secure and efficient exchange of updates between devices and the central server.

Layer of Aggregation Server: Facilitates aggregation of local updates and distribution of updated models.

This framework is compatible with contemporary edge-fog-cloud computing structures that allocate computation tasks in different layers to increase efficiency [1].
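The three layers can be pictured as three small components. The sketch below is an assumption-laden illustration: the class names, the JSON wire format, and the single gradient step are hypothetical, and the communication layer here only serializes messages; a real deployment would add transport security on top.

```python
import json
from dataclasses import dataclass


@dataclass
class EdgeDevice:
    """Edge device layer: holds raw samples and trains locally."""
    device_id: str
    samples: list  # raw (x, y) pairs; never placed in an outgoing message

    def make_update(self, global_w, lr=0.01):
        # One gradient step for a 1-D model y ≈ w * x (illustrative only).
        grad = sum(2 * (global_w * x - y) * x for x, y in self.samples)
        grad /= len(self.samples)
        return {
            "device_id": self.device_id,
            "n": len(self.samples),
            "w": global_w - lr * grad,
        }


def encode(update):
    """Communication layer (send side): serialize an update to bytes."""
    return json.dumps(update).encode("utf-8")


def decode(payload):
    """Communication layer (receive side): parse bytes back into an update."""
    return json.loads(payload.decode("utf-8"))


@dataclass
class AggregationServer:
    """Aggregation server layer: combines updates into a new global model."""
    global_w: float = 0.0

    def aggregate(self, payloads):
        updates = [decode(p) for p in payloads]
        n = sum(u["n"] for u in updates)
        self.global_w = sum(u["w"] * u["n"] / n for u in updates)
        return self.global_w


fleet = [
    EdgeDevice("sensor-a", [(1.0, 3.0), (2.0, 6.0)]),
    EdgeDevice("sensor-b", [(2.0, 6.0)]),
]
server = AggregationServer()
payloads = [encode(d.make_update(server.global_w)) for d in fleet]
print("new global weight:", server.aggregate(payloads))
```

Keeping serialization in its own functions mirrors the layered design: the device and server code does not change if the wire format or transport is swapped out.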

6. Comparison with Centralized Architecture

Centralized ML requires all data to be sent from its source to a distant cloud server. This means heavy bandwidth usage, slow responses, and, most importantly, increased privacy risk, since the data is exposed at every step of the way. FL does a complete 180 on that front: the raw data does not move at all, and only updated model weights are sent. Where centralized ML moves the data to the model, FL moves the model to the data.

Table: Centralized ML vs. Federated Learning – Comparative Analysis

| Aspect | Centralized ML | Federated Learning |
|---|---|---|
| Data Location | Stored in central cloud server | Remains on local device |
| Privacy Risk | High – raw data exposed | Low – only gradients shared |
| Latency | High – round-trip to cloud | Low – local computation |
| Bandwidth | Very high – full dataset transfer | Moderate – model updates only |
| Single Point of Failure | Yes – critical vulnerability | No – distributed resilience |
| GDPR Compliance | Difficult – centralized storage | Easier – privacy-by-design |
| Scalability | Limited by server capacity | Highly scalable (edge nodes) |
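The Bandwidth row of the comparison can be made concrete with back-of-envelope arithmetic. All figures below (sample rates, sample sizes, model size, one training round per day) are hypothetical assumptions chosen for illustration, not measurements; the point is that the saving depends on how large the raw data is relative to the model.

```python
def centralized_bytes(samples_per_day, bytes_per_sample, days):
    """Traffic when every raw sample is uploaded to the cloud."""
    return samples_per_day * bytes_per_sample * days


def federated_bytes(num_params, bytes_per_param, rounds):
    """Traffic per device: one model download and one update upload per round."""
    return 2 * num_params * bytes_per_param * rounds


DAYS = 30
ROUNDS = 30          # assumption: one training round per day
NUM_PARAMS = 100_000  # assumption: small model, 4-byte parameters

fl_traffic = federated_bytes(NUM_PARAMS, 4, ROUNDS)

# Data-heavy device: one 1,000-byte reading per second.
heavy = centralized_bytes(86_400, 1_000, DAYS)

# Data-light device: one 8-byte reading per minute.
light = centralized_bytes(1_440, 8, DAYS)

print(f"federated traffic per device: {fl_traffic:,} bytes")
print(f"centralized, data-heavy:      {heavy:,} bytes")
print(f"centralized, data-light:      {light:,} bytes")
```

Under these assumptions the data-heavy device sends roughly 100 times less with FL, while the data-light device would actually send more, which is consistent with the table rating FL bandwidth as "moderate" rather than negligible.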

7. Frameworks & Real-World Applications

Google Gboard: Likely the most well-known use case for FL. Your mobile keyboard becomes better at predicting words by learning on the device itself. Google does not need to see your private conversations, because only model updates, not the text you type, leave the device [9].

AI in Healthcare: Hospitals are often unable to share patient data for legal and ethical reasons. With FL, several hospitals can collaborate on building models for tasks such as cancer detection without exchanging patient records [8].

Smart Cities & IoT: Smart traffic management, power grids, and predictive maintenance applications generate huge amounts of data. FL allows local training on these nodes, so cities can become smarter and more efficient without transferring critical operational data into a third-party database [2][6].

8. Challenges & Open Research Gaps

Table 1: Key Challenges in Federated Learning for IoT

| Challenge | Root Cause | Research Direction |
|---|---|---|
| Communication Overhead | Frequent gradient exchanges | Gradient compression, sparse updates |
| Device Heterogeneity | Non-IID data distributions | Asynchronous FL, personalization |
| Model Poisoning | Malicious local updates | Byzantine-robust aggregation |
| Edge Resource Limits | Low compute/memory on IoT | Model pruning, quantization |

However, several challenges persist:

– Communication Cost: Each training cycle requires updates to be transmitted from potentially thousands of devices, leading to high communication costs. Compression techniques have shown promise, but the challenge persists.

– Heterogeneous Devices: Not all devices are created equal. Some have weak connectivity or generate data that differs from the rest, which can bias learning and slow model convergence. Asynchronous methods and automated personalization are being explored to address this problem.

– Security Threats: An adversary may attempt to inject malicious updates into the network. Byzantine-robust aggregation is one way to limit the impact of such attacks.
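Two of the research directions listed above can be illustrated briefly. The sketch below assumes model updates are plain lists of floats and uses hypothetical function names: top-k sparsification is one simple form of sparse update, and the coordinate-wise median is one simple Byzantine-robust alternative to averaging. Neither is claimed to be the method used in the cited works.

```python
from statistics import median


def top_k_sparsify(update, k):
    """Keep only the k largest-magnitude entries as an {index: value} dict."""
    largest = sorted(range(len(update)), key=lambda i: abs(update[i]), reverse=True)[:k]
    return {i: update[i] for i in sorted(largest)}


def coordinate_median(updates):
    """Aggregate by taking the median of each coordinate across devices."""
    return [median(column) for column in zip(*updates)]


def coordinate_mean(updates):
    """Plain unweighted averaging, shown for comparison."""
    return [sum(column) / len(column) for column in zip(*updates)]


# Communication cost: send 2 of 4 values instead of the full update.
print(top_k_sparsify([0.1, -2.0, 0.05, 1.5], k=2))

# Security: two honest updates and one malicious outlier.
updates = [[1.0, 1.0], [1.2, 0.9], [100.0, -100.0]]
print("mean:  ", coordinate_mean(updates))
print("median:", coordinate_median(updates))
```

The outlier drags the mean far from the honest updates, while the median stays close to them. The trade-off is that the median ignores dataset sizes and can discard useful information when devices legitimately differ.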

9. Conclusion & Future Directions

In essence, Federated Learning is not a mere enhancement of machine learning but a new paradigm altogether. Rather than aggregating all data in one central repository, Federated Learning lets a network of devices learn from distributed data without revealing it, addressing major drawbacks of centralized ML in IoT, including privacy risks, high latency, and limited scalability. Beyond addressing the limitations of previous solutions, Federated Learning makes it possible to build regulation-compliant and trustworthy artificial intelligence systems [7].

As for the future of federated learning, several key trends can be anticipated. Integration with blockchain may enhance security and make it possible to track the history of model updates. Technologies such as differential privacy and secure multi-party computation may also be used to better protect personal data. Moreover, adopting federated learning in hierarchical edge AI will allow learning at the device, fog, and cloud levels, improving the efficiency of decision-making [1]. Given the importance of privacy and data distribution, federated learning is likely to grow in prevalence as sensitive applications become widespread, for example in healthcare [5][8].
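To give a flavor of how differential privacy is commonly combined with FL, the sketch below clips each update to a maximum L2 norm and then adds Gaussian noise before the update leaves the device. The function names and parameter values are hypothetical, and the sketch does not calibrate the noise to a specific privacy budget (epsilon, delta), which a real deployment would need to do.

```python
import math
import random


def clip_update(update, max_norm):
    """Scale the update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in update))
    if norm <= max_norm:
        return list(update)
    scale = max_norm / norm
    return [v * scale for v in update]


def privatize(update, max_norm, noise_std, rng):
    """Clip, then add independent Gaussian noise to every coordinate."""
    clipped = clip_update(update, max_norm)
    return [v + rng.gauss(0.0, noise_std) for v in clipped]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
raw_update = [3.0, 4.0]  # L2 norm 5.0
print("clipped:", clip_update(raw_update, max_norm=1.0))
print("noisy:  ", privatize(raw_update, max_norm=1.0, noise_std=0.1, rng=rng))
```

Clipping bounds how much any single device can influence the aggregate, and the noise masks what remains; larger noise gives stronger protection at the cost of model accuracy.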

Given that the IoT has experienced tremendous growth and AI has become integrated into daily applications, there is an urgent need for privacy-preserving intelligence in order to facilitate effective decision-making. Federated learning has immense potential to be used as a key component in developing future intelligent systems.

10. References

[1] Singh, R., Singh, S. K., Kumar, S., & Gill, S. S. (2022). SDN-aided edge computing-enabled AI for IoT and smart cities. In SDN-supported edge-cloud interplay for next generation internet of things (pp. 41-70). Chapman and Hall/CRC.

[2] Briggs, C., Fan, Z., & Andras, P. (2021). A review of privacy-preserving federated learning for the Internet-of-Things. Federated learning systems: towards next-generation AI, 21-50.

[3] K. Hu et al., “Federated Learning: A Distributed Machine Learning Method,” Springer, 2021.

[4] Z. Cui et al., “Communication-efficient Federated Learning Models,” IEEE Transactions on Parallel and Distributed Systems (TPDS), 2022.

[5] B. Thapaliya et al., “Efficient Federated Learning for Healthcare Data,” Nature Digital Medicine, 2024.

[6] Y. Zhou et al., “Communication-Efficient Federated Learning Framework,” IEEE Internet of Things Journal, 2020.

[7] P. Kairouz et al., “Advances and Open Problems in Federated Learning,” Foundations and Trends in Machine Learning, 2021.

[8] Insights2TechInfo, “Deep Learning for Early Identification of Neurodegenerative Diseases,” Insights2techinfo.com, 2024.

[9] H. B. McMahan et al., “Communication-Efficient Learning of Deep Networks from Decentralized Data,” AISTATS, 2017.

[10] Ho, G. T. S., Lee, C. K. H., Tang, V., Chow, E.W.H. (2025). Enhancing AGV Picking Efficiency: Fuzzy Association Rules Mining in Automated E-fulfillment Centers. In The International Conference for Production Research Asia Pacific Region (ICPR-APR), Hong Kong.

[11] Ho, G. T. S., Tam, M. M. F., Tang, V., Chow, E.W.H. (2025). A Demand-Driven Holistic Management Approach for Optimizing Pick Face Replenishment and Routing. In International Conference on Intelligent Media and Sustainable Technology Management (IMSTM), Osaka, Japan.

[12] Ho, G. T. S., Tang, Y. M., Kuo, W. T., & Tang, V. (2025), “Blockchain‐Based Platform for Information Security and Visual Management in Coffee Trading”, Concurrency and Computation: Practice and Experience, 37(25-26), e70299.

Cite As

Chahal G. (2026) Federated Learning for Privacy-Preserving AI in IoT Systems, Insights2Techinfo, pp.1