By: Abhay Pratap Singh, Department of CSE, Chandigarh College of Engineering and Technology, Panjab University, Chandigarh, E-mail: co23306@ccet.ac.in

Abstract

Variational quantum algorithms (VQAs), often viewed as the most likely path to quantum advantage, suffer from an intrinsic scaling barrier known as the barren plateau effect. As the quantum circuits needed for complex problems grow deeper, noise steadily erases information, and the circuit's output stops depending on its earlier states and parameters. In this article, we examine how noise, restricted circuit connectivity, and algebraic structure combine to produce a featureless cost landscape. In particular, we look at the roles of contraction mappings, linear depth limits, and the curse of dimensionality.

Introduction

The noisy intermediate-scale quantum (NISQ) regime is characterized by machines with a substantial number of qubits that are hampered by relatively high noise levels. Variational quantum circuits act as a bridge here, using classical optimizers to tune parameterized quantum circuits for applications such as machine learning and chemistry. As these circuits grow deeper, “noise-induced barren plateaus” (NIBPs) appear: the gradient of the cost function vanishes exponentially. Once the cost is insensitive to the trainable parameters, estimating a useful update direction requires exponentially many measurements, so optimization becomes statistically infeasible. Understanding why this happens is essential for avoiding a scalability roadblock.

The Contractive Nature of Noise Maps

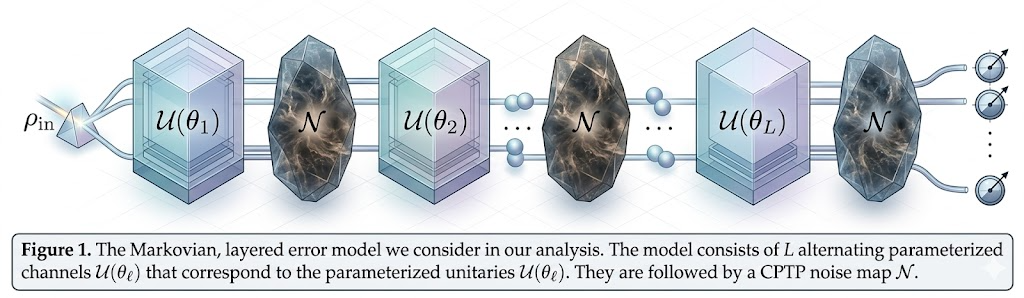

The first reason deep circuits discard their history is contractivity, a mathematical property of noise channels. Schumann et al. (2024) consider a layered error model in which parameterized unitaries alternate with completely positive trace-preserving (CPTP) noise maps. Such maps make quantum states harder to distinguish, at a rate set by the map's contraction coefficient (its Lipschitz constant). When the noise is strictly contractive, as with amplitude damping channels or certain kinds of Pauli noise, repeated application drives the state toward a unique fixed point regardless of the chosen parameters. Hence, as the number of layers grows, the output state loses its dependence on the parameters of the earlier layers.

Figure 1: Markovian layered error model showing L alternating parameterized unitary channels U(θℓ) interleaved with CPTP noise maps N, illustrating how repeated noise applications drive the quantum state toward a fixed point and erase historical parameter dependence. (generated using AI Tools)
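As a rough numerical illustration of this contraction, the sketch below tracks a single qubit as a Bloch vector through alternating rotations and noise. The single-qubit setting, the choice of a depolarizing channel, the noise strength `p`, and all function names are assumptions made for illustration; they are not taken from Schumann et al. (2024). Because every layer shrinks the Bloch vector by `1 - p`, the trace distance between the outputs of any two parameter settings cannot exceed `(1 - p) ** depth`.

```python
import math
import random

def rotate_y(v, theta):
    """Rotate a Bloch vector (x, y, z) by angle theta about the y axis."""
    x, y, z = v
    c, s = math.cos(theta), math.sin(theta)
    return (c * x + s * z, y, -s * x + c * z)

def depolarize(v, p):
    """Depolarizing channel (assumed noise model): shrink the Bloch vector by 1 - p."""
    return tuple((1 - p) * a for a in v)

def run_circuit(thetas, p):
    """Apply one rotation followed by one noise map per layer, starting from |0>."""
    v = (0.0, 0.0, 1.0)
    for theta in thetas:
        v = depolarize(rotate_y(v, theta), p)
    return v

def trace_distance(u, v):
    """For single-qubit states, trace distance is half the Bloch-vector distance."""
    return 0.5 * math.dist(u, v)

def max_parameter_sensitivity(depth, p, trials=200, seed=0):
    """Largest output distance found between pairs of random parameter settings."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        a = [rng.uniform(0, 2 * math.pi) for _ in range(depth)]
        b = [rng.uniform(0, 2 * math.pi) for _ in range(depth)]
        worst = max(worst, trace_distance(run_circuit(a, p), run_circuit(b, p)))
    return worst

if __name__ == "__main__":
    for depth in (1, 5, 20, 50):
        print(depth, max_parameter_sensitivity(depth, p=0.1), 0.9 ** depth)
```

The printed sensitivity stays below the bound in the last column at every depth, which is the single-qubit version of the state forgetting its parameters.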

Hardware Architecture as a Scaffold for Noise Propagation

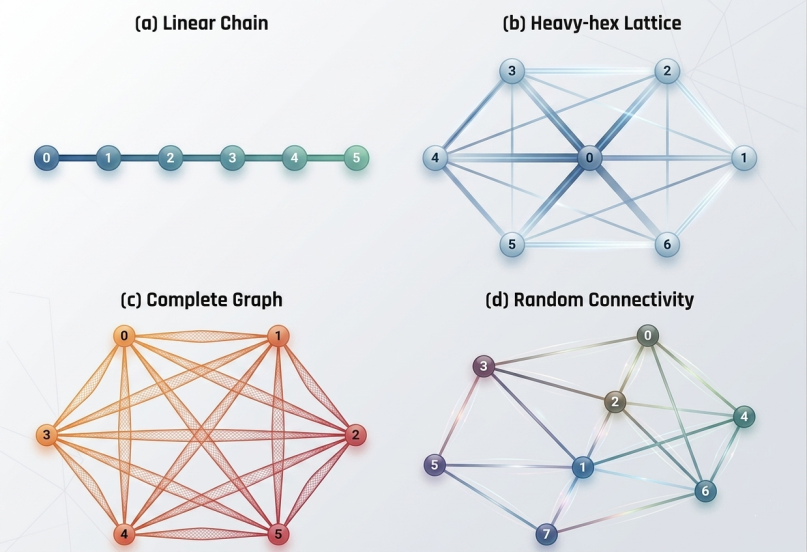

Information is not lost at the same rate on every device, because other factors also play a role, including the connectivity topology of the hardware. A quantum device can be represented as a graph in which each node is a qubit and each edge represents an available two-qubit gate (Goyal et al., 2024). Machine learning studies indicate that decoherence in superconducting architectures is strongly affected by connectivity features such as betweenness centrality and spectral entropy, while semiconductor quantum dot devices are affected mainly by overall size and number of nodes. These topological structures determine how environmental noise spreads through the system and therefore the rate of degradation (Fu et al., 2026).

Figure 2: Four representative quantum hardware connectivity topologies — (a) Linear Chain, (b) Heavy-hex Lattice, (c) Complete Graph, and (d) Random Connectivity — illustrating how architectural structure influences noise propagation and the rate of coherence loss. (generated using AI Tools)
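To make the graph picture concrete, the following sketch computes betweenness centrality, one of the connectivity features mentioned above, for two of the topologies in Figure 2. It uses Brandes' algorithm for unweighted graphs; the adjacency-list encoding and the function names are assumptions for illustration, and this is not the feature pipeline of Fu et al. (2026).

```python
from collections import deque

def linear_chain(n):
    """Qubits 0..n-1, each coupled to its nearest neighbours."""
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}

def complete_graph(n):
    """Every qubit coupled to every other qubit."""
    return {i: [j for j in range(n) if j != i] for i in range(n)}

def betweenness(adj):
    """Unnormalized betweenness centrality of an undirected, unweighted graph."""
    score = {v: 0.0 for v in adj}
    for s in adj:
        dist = {s: 0}
        paths = {v: 0 for v in adj}   # number of shortest paths from s
        paths[s] = 1
        preds = {v: [] for v in adj}  # predecessors on shortest paths
        order = []
        queue = deque([s])
        while queue:                  # breadth-first search from s
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    paths[w] += paths[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        for w in reversed(order):     # accumulate dependencies back toward s
            for v in preds[w]:
                delta[v] += paths[v] / paths[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each unordered pair of endpoints was counted twice.
    return {v: b / 2 for v, b in score.items()}

if __name__ == "__main__":
    print("chain   :", betweenness(linear_chain(5)))
    print("complete:", betweenness(complete_graph(5)))
```

In the chain, the middle qubits lie on most shortest paths and therefore score highest, while in the complete graph no qubit mediates any path and every score is zero.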

The Taxonomy of Scalability Barriers

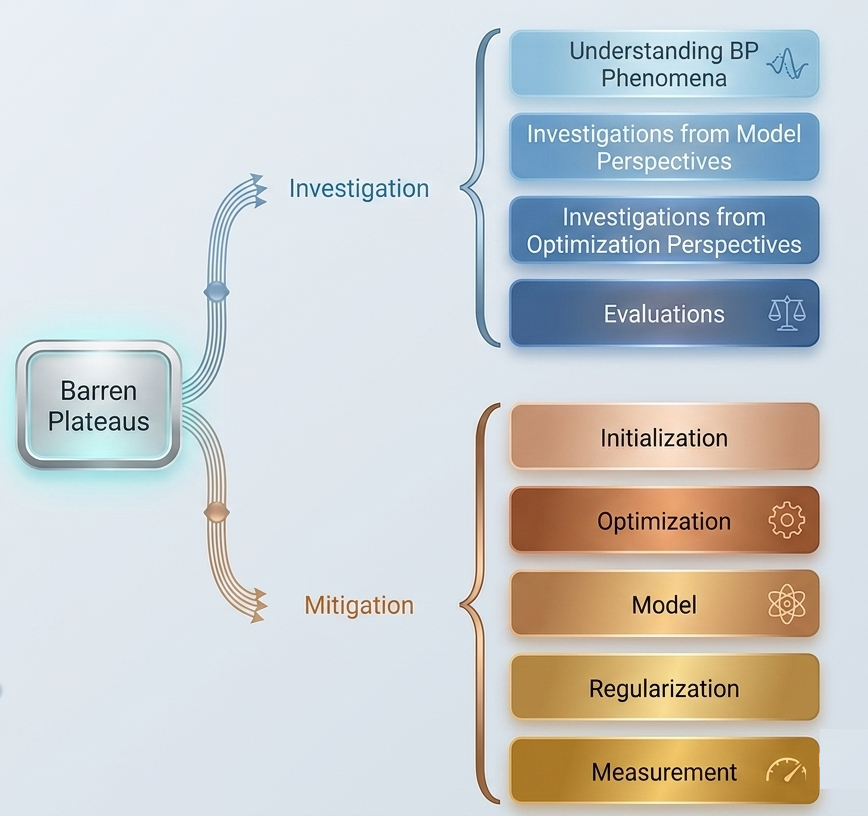

Barren plateaus are a pervasive problem in quantum computing and can be classified by their source. In the case of SBP, the cause is high expressivity: when the circuit is expressive enough to approximate a unitary 2-design, the gradient variance vanishes exponentially with the number of qubits. Besides expressivity, measurement locality and initial-state entanglement help determine whether the landscape turns into a barren plateau. Mitigation measures include tailored parameter initialization, such as identity blocks and Gaussian distributions, as well as layer-wise training. Nonetheless, the problem persists: as the architecture grows larger, the landscape still tends to flatten into a barren plateau (Cunningham & Zhuang, 2025).

Figure 3: Hierarchical taxonomy of barren plateau (BP) research, branching into investigation approaches (model and optimization perspectives, evaluations) and mitigation strategies (initialization, optimization, model design, regularization, and measurement).
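In symbols, the exponential vanishing of the gradient variance is commonly written as below. Here $C(\theta)$ is the cost, $\partial_k C$ its derivative with respect to the $k$-th trainable parameter, and $n$ the number of qubits; the bounding function $F$, the base $b$, and the threshold $\delta$ are notation introduced here for illustration. The second line is Chebyshev's inequality, which turns the variance bound into a bound on the probability of seeing a gradient of usable size.

```latex
\[
\mathbb{E}_{\theta}\!\left[\partial_k C(\theta)\right] = 0,
\qquad
\mathrm{Var}_{\theta}\!\left[\partial_k C(\theta)\right] \le F(n),
\qquad
F(n) \in \mathcal{O}\!\left(b^{-n}\right), \quad b > 1
\]
\[
\Pr\!\left[\,\left|\partial_k C(\theta)\right| \ge \delta\,\right]
\le \frac{\mathrm{Var}_{\theta}\!\left[\partial_k C(\theta)\right]}{\delta^{2}}
\le \frac{F(n)}{\delta^{2}}
\]
```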

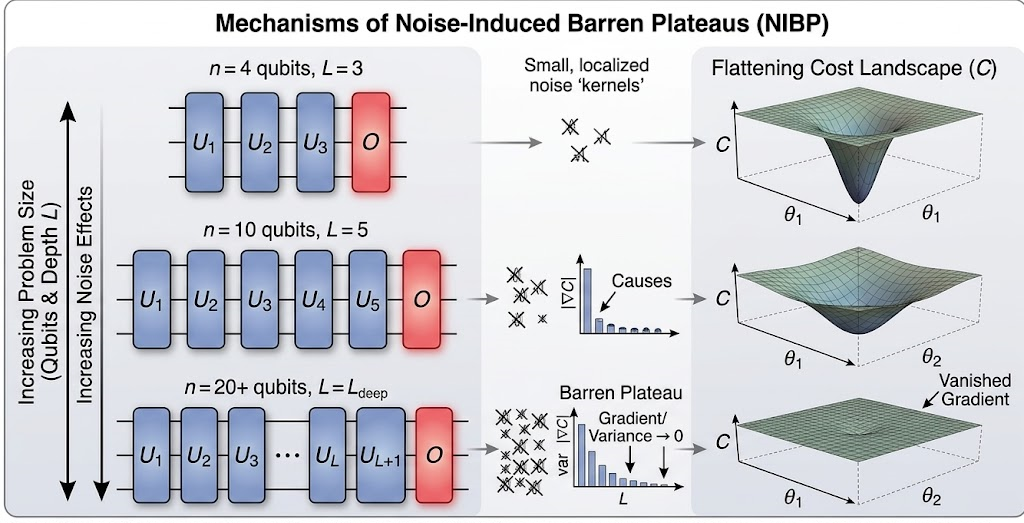

The Linear Depth Barrier and Pauli Noise

An important analytic result is that, under local Pauli noise, the training signal vanishes exponentially when the circuit depth grows linearly with the number of qubits. This noise-induced barren plateau (NIBP) differs from the noise-free case: noise flattens the entire landscape, whereas a noise-free barren plateau is a probabilistic statement, meaning the landscape is flat on average over the parameters but can still contain narrow regions with large gradients. Because the gradient decays exponentially with circuit depth, an exponential number of shots is needed to estimate the training direction, which eliminates any quantum advantage (Wang et al., 2021).

Figure 4: Mechanisms of Noise-Induced Barren Plateaus (NIBPs) across increasing problem sizes: as circuit depth L and qubit count n grow, localized noise kernels accumulate, causing gradient variance to vanish and the cost landscape C to flatten completely.
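The shot-count argument can be made concrete with a back-of-the-envelope sketch. It assumes that the gradient magnitude is bounded by `g0 * q**depth` for some noise parameter `0 < q < 1`, and that a single-shot estimate has standard deviation `sigma`, so the standard error after `N` shots is `sigma / sqrt(N)`. The names `g0`, `q`, `sigma`, and both functions are illustrative assumptions, not quantities taken from Wang et al. (2021).

```python
import math

def gradient_bound(depth, q, g0=1.0):
    """Assumed upper bound on the gradient magnitude: g0 * q**depth, with 0 < q < 1."""
    return g0 * q ** depth

def shots_needed(depth, q, g0=1.0, sigma=1.0):
    """Smallest shot count whose standard error sigma / sqrt(N) is at or below the bound."""
    return math.ceil((sigma / gradient_bound(depth, q, g0)) ** 2)

if __name__ == "__main__":
    for depth in (10, 20, 40, 80):
        print(depth, shots_needed(depth, q=0.9))
```

Under these assumptions the required shots grow like `q ** (-2 * depth)`, so each doubling of the depth roughly squares the measurement cost.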

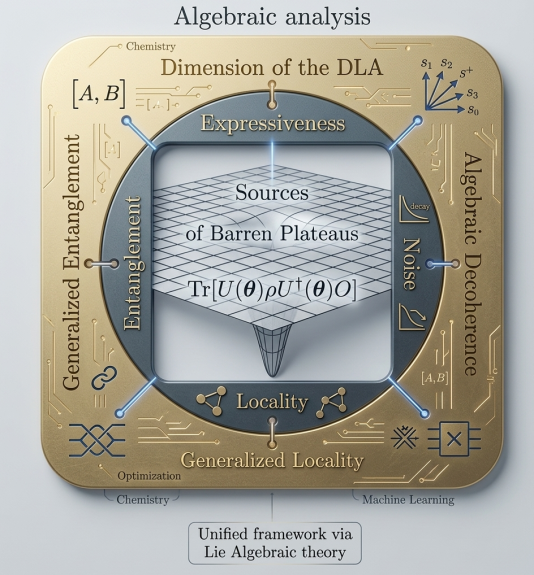

Lie Algebraic Unification of Information Loss

To uncover the common cause behind these plateaus, researchers have developed a Lie algebraic theory that unifies the known sources of information loss. For sufficiently deep circuits, the variance of the loss scales inversely with the dimension of the Dynamical Lie Algebra (DLA), so more expressive circuits, which have larger DLAs, produce a loss that concentrates more tightly around its mean (Bansal et al., 2026). The g-purity, in turn, quantifies the generalized entanglement of a state with respect to the algebra. Noise leads to “algebraic decoherence,” in which generalized entanglement is generated or the generalized locality of the measurement operators is reduced. The theory shows that when the DLA dimension is exponentially large, the circuit is bound to forget its past and become untrainable, irrespective of the chosen initial state or measurement (Ragone et al., 2024).

Figure 5: Unified Lie algebraic framework for barren plateau analysis, mapping the interplay between the Dynamical Lie Algebra (DLA) dimension, expressiveness, generalized entanglement, algebraic decoherence, and generalized locality — linking chemistry, optimization, and machine learning perspectives. (generated using AI Tools)
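The DLA dimension can be computed directly for small examples. The sketch below assumes that every generator is a single Pauli string with its own parameter, in which case the commutator of two strings is either zero or proportional to another Pauli string, and the DLA is spanned by the strings reached through repeated commutation. The two generator sets (an Ising-type chain and the same chain with extra local Z fields) and all function names are assumptions chosen for illustration, not examples from Ragone et al. (2024).

```python
def pauli(n, ops):
    """Build an n-qubit Pauli string from {position: letter}, identity elsewhere."""
    return "".join(ops.get(i, "I") for i in range(n))

def anticommute(p, q):
    """Two Pauli strings anticommute iff they differ on an odd number of non-identity sites."""
    return sum(a != "I" and b != "I" and a != b for a, b in zip(p, q)) % 2 == 1

def product(p, q):
    """Product of two Pauli strings, ignoring the overall phase."""
    out = []
    for a, b in zip(p, q):
        if a == "I":
            out.append(b)
        elif b == "I":
            out.append(a)
        elif a == b:
            out.append("I")
        else:
            out.append(({"X", "Y", "Z"} - {a, b}).pop())
    return "".join(out)

def dla_dimension(generators):
    """Count the Pauli strings in the closure of the generators under commutation."""
    basis = set(generators)
    frontier = set(generators)
    while frontier:
        new = set()
        for p in frontier:
            for q in basis:
                if anticommute(p, q):       # nonzero commutator
                    r = product(p, q)
                    if r not in basis:
                        new.add(r)
        basis |= new
        frontier = new
    return len(basis)

def ising_generators(n):
    """X on every qubit and ZZ on neighbouring pairs of an open chain."""
    gens = [pauli(n, {i: "X"}) for i in range(n)]
    return gens + [pauli(n, {i: "Z", i + 1: "Z"}) for i in range(n - 1)]

def ising_with_z_fields(n):
    """The Ising generators plus a local Z on every qubit."""
    return ising_generators(n) + [pauli(n, {i: "Z"}) for i in range(n)]

if __name__ == "__main__":
    for n in (2, 3, 4, 5):
        print(n, dla_dimension(ising_generators(n)), dla_dimension(ising_with_z_fields(n)))
```

For the Ising-type set the dimension grows only polynomially with the number of qubits, whereas adding local Z fields makes it grow exponentially, which is the regime the theory associates with untrainable deep circuits.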

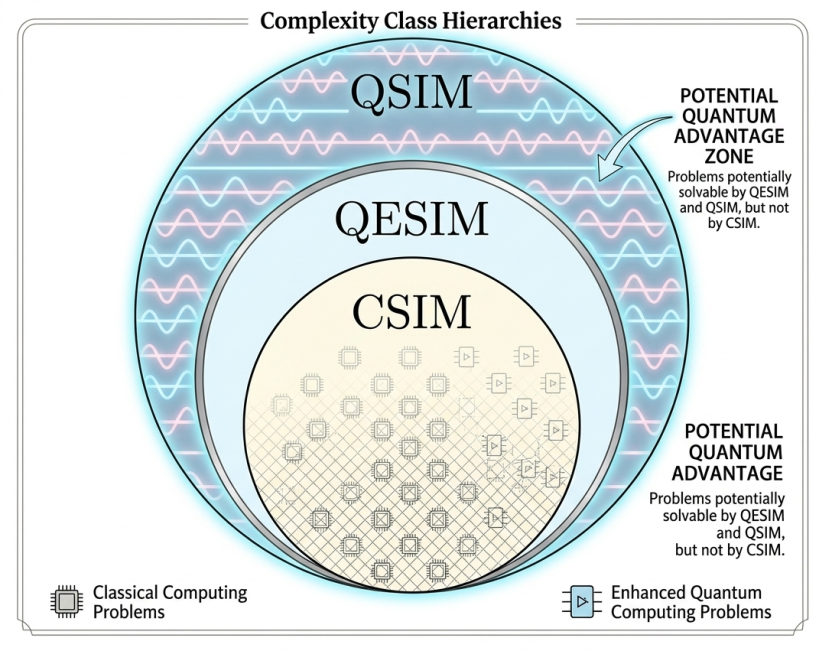

The Curse of Dimensionality and Classical Simulability

Barren plateaus can be viewed as a manifestation of the curse of dimensionality. Certain quantum circuits avoid plateaus because they are constrained to evolve within subspaces of polynomial dimension. Staying inside these small subspaces keeps the circuits trainable, but it can also make them classically simulable (Singh et al., 2024). This raises a further problem of “soft dequantization”: many architectures that are free of barren plateaus can be simulated efficiently on classical computers, provided that some initial data are first collected on a quantum computer (Cerezo et al., 2025). Thus, the very properties that let a circuit keep its history are the ones that make classical simulation possible.

Figure 6: Complexity class hierarchy illustrating the relationship between classical simulation (CSIM), quantum-enhanced simulation (QESIM), and full quantum simulation (QSIM), highlighting the two potential quantum advantage zones where QSIM or QESIM outperform classical methods.

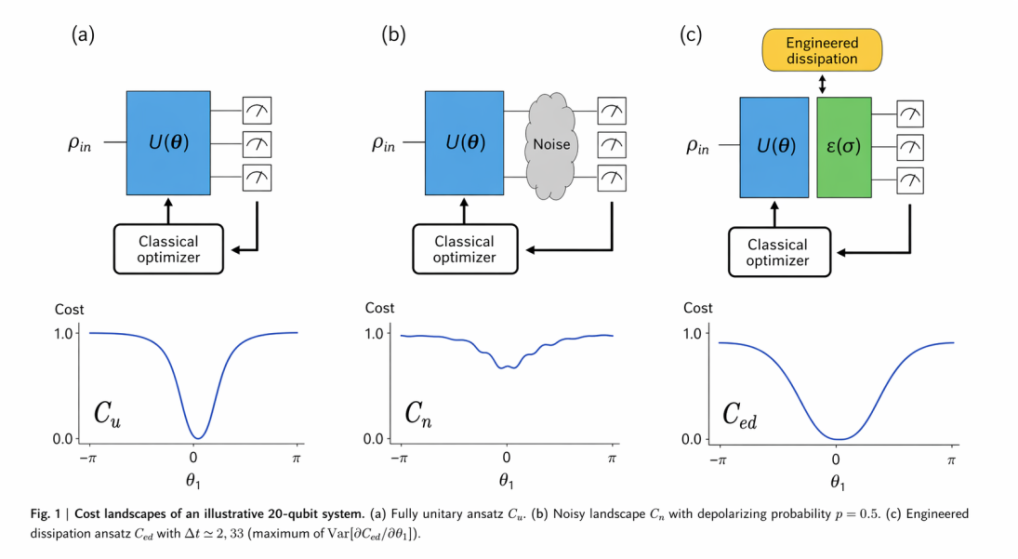

Restoring Signal Through Engineered Dissipation

Whereas generic noise erases gradient information, engineered dissipation can act as an enabling component that makes the model trainable again. When engineered Markovian dissipation is added after each unitary layer, a global loss function can be mapped to a local one, which is resistant to barren plateaus for shallow quantum circuits. These non-unitary components can also serve as an initialization step, so that the model starts at a lower energy than the original initial state. Studies on quantum chemistry problems indicate that dissipative models converge far more reliably than unitary-only models (Sannia et al., 2024).

Figure 7: Comparison of three variational quantum algorithm (VQA) paradigms and their cost landscapes: (a) ideal unitary circuit C_u, (b) circuit with generic noise C_n causing barren plateaus, and (c) circuit with engineered dissipation ε(σ) producing C_ed — restoring a trainable, well-defined cost landscape.
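The contrast between generic noise and dissipation with a designed fixed point can be seen in a single-qubit toy model. This is only a sketch under strong assumptions: amplitude damping toward |0> stands in for engineered dissipation, depolarizing noise stands in for generic noise, and the Hamiltonian is taken to be H = -Z so that |0> is the ground state. None of these choices, nor the function names, come from the cited work on engineered dissipation.

```python
import math

def rotate_y(v, theta):
    """Unitary layer: rotate the Bloch vector (x, y, z) about the y axis."""
    x, y, z = v
    c, s = math.cos(theta), math.sin(theta)
    return (c * x + s * z, y, -s * x + c * z)

def generic_noise(v, p):
    """Depolarizing noise (assumed): contracts every state toward the maximally mixed state."""
    return tuple((1 - p) * a for a in v)

def engineered_dissipation(v, gamma):
    """Amplitude damping (assumed stand-in): contracts every state toward |0>."""
    x, y, z = v
    k = math.sqrt(1 - gamma)
    return (k * x, k * y, gamma + (1 - gamma) * z)

def energy(v):
    """Energy for the toy Hamiltonian H = -Z; the ground state |0> has energy -1."""
    return -v[2]

def final_energy(thetas, channel, strength):
    """Start in |+> (energy 0) and apply a rotation then the channel in every layer."""
    v = (1.0, 0.0, 0.0)
    for theta in thetas:
        v = channel(rotate_y(v, theta), strength)
    return energy(v)

if __name__ == "__main__":
    thetas = [0.0] * 10  # identity layers isolate the effect of the channel
    print("generic   :", final_energy(thetas, generic_noise, 0.2))
    print("engineered:", final_energy(thetas, engineered_dissipation, 0.2))
```

With identity layers, the generic channel leaves the energy at zero while the state decays toward the maximally mixed state, whereas the damping channel lowers the energy toward the ground-state value in every layer.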

Conclusion

The loss of memory in deep quantum circuits, in the form of noise-induced barren plateaus, is a central problem of the NISQ era. This information loss stems from the contractive effect of noise, the structure of the hardware, and the exponentially large dimension of the relevant operator algebras. Understanding the mechanism, however, points to ways out. Possible directions include simulating part of the quantum system on classical computers and engineering dissipation to keep training information local. The goal is to design scalable systems that balance expressivity with trainability. Solving this problem is critical to making quantum computing viable.

References

- Schumann, M., Wilhelm, F. K., & Ciani, A. (2024). Emergence of noise-induced barren plateaus in arbitrary layered noise models. Quantum Science and Technology, 9(4), 045019. https://doi.org/10.1088/2058-9565/ad6285

- Goyal, S., Singh, S. K., Kumar, S., Sarin, S., Gupta, B. B., Arya, V., & Chui, K. T. (2024). Hyperdimensional Consumer Pattern Analysis with Quantum Neural Architectures using Non-Hermitian Operators. Proceedings of the 5th International Conference on Information Management & Machine Intelligence, ICIMMI ’23, 1–5. https://doi.org/10.1145/3647444.3652458

- Fu, Q., Liu, J., Wang, X., & Xiong, R. (2026). Machine Learning the Decoherence Property of Superconducting and Semiconductor Quantum Devices from Graph Connectivity. Entropy, 28(1), 89. https://doi.org/10.3390/e28010089

- Cunningham, J., & Zhuang, J. (2025). Investigating and mitigating barren plateaus in variational quantum circuits: A survey. Quantum Information Processing, 24(2), 48. https://doi.org/10.1007/s11128-025-04665-1

- Wang, S., Fontana, E., Cerezo, M., Sharma, K., Sone, A., Cincio, L., & Coles, P. J. (2021). Noise-induced barren plateaus in variational quantum algorithms. Nature Communications, 12(1), 6961. https://doi.org/10.1038/s41467-021-27045-6

- Bansal, A., Kumar, S., Singh, S. K., Arya, V., & Chui, K. T. (2026). On-Chip Intelligence for Real-Time Threat Monitoring. In AI-Driven Hardware Security: Architectures, Chips, and Trust (pp. 113–140). IGI Global Scientific Publishing. https://doi.org/10.4018/979-8-3373-8187-9.ch004

- Ragone, M., Bakalov, B. N., Sauvage, F., Kemper, A. F., Ortiz Marrero, C., Larocca, M., & Cerezo, M. (2024). A Lie algebraic theory of barren plateaus for deep parameterized quantum circuits. Nature Communications, 15(1), 7172. https://doi.org/10.1038/s41467-024-49909-3

- Singh, M., Singh, S. K., Kumar, S., Preet, M., Arya, V., & Gupta, B. B. (2024). Quantum-Resilient Cryptographic Primitives: An Innovative Modular Hash Learning Algorithm to Enhanced Security in the Quantum Era. In Review. https://doi.org/10.21203/rs.3.rs-4052058/v1

- Cerezo, M., Larocca, M., García-Martín, D., Diaz, N. L., Braccia, P., Fontana, E., Rudolph, M. S., Bermejo, P., Ijaz, A., Thanasilp, S., Anschuetz, E. R., & Holmes, Z. (2025). Does provable absence of barren plateaus imply classical simulability? Nature Communications, 16, 7907. https://doi.org/10.1038/s41467-025-63099-6

- Sannia, A., Tacchino, F., Tavernelli, I., Giorgi, G. L., & Zambrini, R. (2024). Engineered dissipation to mitigate barren plateaus. Npj Quantum Information, 10(1), 81. https://doi.org/10.1038/s41534-024-00875-0

- Tang, V., Choy, K. L., Ho, G. T. S., Lam, H. Y., & Tsang, Y. P. (2019). An IoMT-based geriatric care management system for achieving smart health in nursing homes. Industrial Management & Data Systems, 119(8), 1819–1840.

- Tang, V., Siu, P. K. Y., Choy, K. L., Lam, H. Y., Ho, G. T. S., Lee, C. K. M., & Tsang, Y. P. (2019). An adaptive clinical decision support system for serving the elderly with chronic diseases in healthcare industry. Expert Systems.

- Tang, V., Siu, P. K., Choy, K. L., Ho, G. T. S., Lam, H. Y., & Tsang, Y. P. (2018). A web mining-based case adaptation model for quality assurance of pharmaceutical warehouses. International Journal of Logistics Research and Applications, 22(4), 325–348.

Cite As

Singh A.P. (2026) Why Deep Quantum Circuits Forget Their Own History – And Why That’s a Crisis, Insights2Techinfo, pp.1