By: K. Yadav, B. B. Gupta, D. Peraković

In recent years, a large body of research has applied machine learning algorithms to defend against cyber-threats. Over time, however, attackers have become more sophisticated and now use the same techniques to build cyber-attacks. In some cases, attacks built with machine learning are far more damaging than human-crafted ones. Phishing, data poisoning, and brute-force attacks are among the common cyber-attacks that can be built with the help of machine learning algorithms.

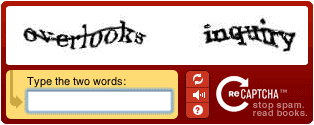

Bypassing CAPTCHA

CAPTCHAs are embedded in web applications to prevent malicious parties from performing brute-force attacks or creating accounts in bulk for malicious purposes [1]. A CAPTCHA asks the user to solve a small text or image puzzle that a bot is expected to find difficult. With the advancement of deep learning algorithms such as convolutional neural networks (CNNs), machines can now solve these puzzles easily and thereby bypass CAPTCHAs. The authors of [2] used a semi-supervised, CNN-based approach combined with transfer learning to break CAPTCHAs, achieving 80% accuracy after six fine-tuning steps on a five-digit text-based CAPTCHA system. The authors of [3] observed that recognizing CAPTCHAs through separate preprocessing and segmentation stages is a lengthy process, and that any fault in an earlier stage degrades the performance of the recognizer. To address this, they synthesized two datasets and trained a CNN model on them, achieving accuracies of 86.5% and 83.3% on the easy and complex CAPTCHA datasets, respectively.
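A CNN-based CAPTCHA solver stacks convolution, non-linearity, and pooling layers, then classifies the resulting features into characters. The dependency-free sketch below implements only those three building blocks on a toy 6×6 image patch. It is not the model of [2] or [3]: the patch and the hand-picked kernel are assumptions for illustration, whereas a real CNN learns its kernels from labelled CAPTCHA images.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """Valid-mode 2D cross-correlation, the operation CNN libraries call "convolution"."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(x: Matrix) -> Matrix:
    """Element-wise max(0, v) non-linearity."""
    return [[max(0.0, v) for v in row] for row in x]


def max_pool2x2(x: Matrix) -> Matrix:
    """Non-overlapping 2x2 max pooling; an odd trailing row/column is dropped."""
    return [
        [max(x[i][j], x[i][j + 1], x[i + 1][j], x[i + 1][j + 1])
         for j in range(0, len(x[0]) - 1, 2)]
        for i in range(0, len(x) - 1, 2)
    ]


# Toy 6x6 grayscale patch: a vertical stroke in column 2 (like part of the digit "1").
patch = [[1.0 if col == 2 else 0.0 for col in range(6)] for _ in range(6)]

# Hand-picked vertical-line detector; a trained CNN would learn its kernels from data.
vertical_line = [[-1.0, 2.0, -1.0]] * 3

feature_map = relu(conv2d(patch, vertical_line))  # 4x4 map, strong response in column 1
pooled = max_pool2x2(feature_map)                 # 2x2 summary passed to the next layer
print(pooled)  # → [[6.0, 0.0], [6.0, 0.0]]
```

Stacking several such layers, each with many learned kernels, is what lets a CNN read distorted characters directly from pixels without a hand-built segmentation stage.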

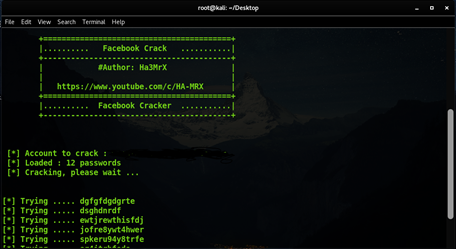

Password Bruteforce

The brute-force approach to cracking passwords has long been used against wireless networks and login pages. People often choose passwords related to their phone number, their pet's name, their family members' names, and so on. John the Ripper is a tool that takes several such parameters into account and generates candidate passwords, which can then be used to carry out brute-force attacks. Machine learning algorithms can capture more complex relationships between these parameters and generate password guesses that are more likely to be correct than human-crafted ones. The authors of [4] used Torch-rnn to generate passwords, and their experimental results suggest that passwords generated with an AI method have a significantly higher success rate than those generated with non-AI methods.
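Torch-rnn, used in [4], trains a character-level recurrent neural network. As a much simpler stand-in for the same idea (learn which characters tend to follow which, then sample and rank new guesses), the sketch below fits a first-order character Markov chain to a tiny, made-up training list. The training list, the `^`/`$` boundary markers, and all function names are hypothetical; this is not the model used in [4].

```python
import random
from collections import Counter, defaultdict

START, END = "^", "$"  # hypothetical boundary markers, assumed never to occur in passwords


def train_markov(passwords):
    """Count first-order character transitions, including password start and end."""
    transitions = defaultdict(Counter)
    for pw in passwords:
        chars = [START, *pw, END]
        for prev, nxt in zip(chars, chars[1:]):
            transitions[prev][nxt] += 1
    return transitions


def probability(transitions, pw):
    """Probability the chain assigns to a whole password; 0.0 if any step was never seen."""
    p = 1.0
    chars = [START, *pw, END]
    for prev, nxt in zip(chars, chars[1:]):
        counts = transitions.get(prev, Counter())
        if counts[nxt] == 0:
            return 0.0
        p *= counts[nxt] / sum(counts.values())
    return p


def sample(transitions, rng, max_len=16):
    """Walk the chain from START, drawing each next character by its observed frequency."""
    out, current = [], START
    while len(out) < max_len:
        counts = transitions[current]
        nxt = rng.choices(list(counts), weights=list(counts.values()), k=1)[0]
        if nxt == END:
            break
        out.append(nxt)
        current = nxt
    return "".join(out)


# Hypothetical toy training list echoing "pet name + digits" habits.
training = ["fluffy2010", "fluffy123", "rex2010", "max123"]
model = train_markov(training)
rng = random.Random(42)
candidates = {sample(model, rng) for _ in range(50)}
ranked = sorted(candidates, key=lambda pw: probability(model, pw), reverse=True)
print(ranked[:5])
```

Ranking candidates by probability means the most likely guesses are tried first. An RNN such as Torch-rnn replaces the single-character context used here with a learned summary of the whole prefix.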

Sophisticated Malware

Attackers are now using machine learning to create malware that can evade the ML models currently deployed for malware detection. The authors of [5] proposed training a model with reinforcement learning for this purpose. The trained agent repeatedly interacts with existing malware samples, observes them in the form of features, and learns which actions, that is, which changes to the structure of the malware, help it avoid detection. Malware modified in this way tends to evade anti-malware engines; in their experiments, the authors report a 75% success rate in evading these engines.
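The authors of [5] train a deep reinforcement-learning agent against real anti-malware engines. The toy sketch below shows only the shape of that loop, using tabular Q-learning rather than a deep network: the "sample" is an abstract 4-bit feature vector, the detector is an invented linear scorer, and each action flips one feature. The weights, rewards, and hyperparameters are all hypothetical.

```python
import random

# Hypothetical linear "detector" over 4 abstract binary features (not a real engine).
WEIGHTS = [2.0, 1.5, -1.0, 0.5]
BIAS = -1.0
N_ACTIONS = len(WEIGHTS)  # action i flips feature i


def detected(state):
    """The toy detector flags a sample when its linear score is positive."""
    return sum(w * x for w, x in zip(WEIGHTS, state)) + BIAS > 0


def step(state, action):
    """Apply one modification; reward +1 on evasion, a small penalty otherwise."""
    nxt = list(state)
    nxt[action] = 1 - nxt[action]
    nxt = tuple(nxt)
    evaded = not detected(nxt)
    return nxt, (1.0 if evaded else -0.1), evaded


def train(start, episodes=500, max_steps=6, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with an epsilon-greedy behaviour policy."""
    rng = random.Random(seed)
    q = {}

    def values(s):
        return q.setdefault(s, [0.0] * N_ACTIONS)

    for _ in range(episodes):
        state = start
        for _ in range(max_steps):
            if rng.random() < epsilon:
                action = rng.randrange(N_ACTIONS)
            else:
                action = values(state).index(max(values(state)))
            nxt, reward, done = step(state, action)
            target = reward if done else reward + gamma * max(values(nxt))
            values(state)[action] += alpha * (target - values(state)[action])
            state = nxt
            if done:
                break
    return q


def greedy_rollout(q, start, max_steps=6):
    """Follow the learned policy until the toy detector no longer fires."""
    state, actions = start, []
    while detected(state) and len(actions) < max_steps:
        row = q.get(state, [0.0] * N_ACTIONS)
        action = row.index(max(row))
        state, _, _ = step(state, action)
        actions.append(action)
    return actions, state


START_STATE = (1, 1, 0, 1)  # toy score 3.0, so it starts out detected
q_table = train(START_STATE)
actions, final_state = greedy_rollout(q_table, START_STATE)
print(actions, final_state, detected(final_state))
```

The agent is rewarded only when the detector stops firing, so it learns a short sequence of feature changes that pushes the score below the threshold. In [5] the state comes from features of real malware samples and the actions change their structure, which this toy does not model.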

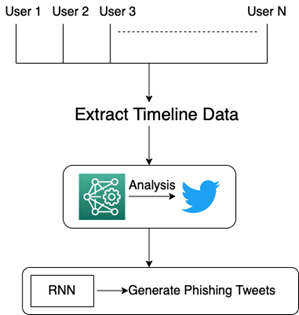

Advanced Phishing

Phishing generally means sending malicious links to a targeted group of users. When clicked, the link redirects the user to an attacker-controlled site from which credentials can be stolen. The success rate of phishing therefore depends on how likely users are to click the link. Hackers and scammers typically send enticing emails that persuade recipients to click. However, many users are now aware of such emails and recognize them as phishing. Machine learning can be used here to craft more convincing emails that sound genuine: an algorithm analyzes a user's past history and, based on various parameters of that history, crafts an email that appears more genuine than a manually written one.

The authors of [6] used RNN-based techniques to generate phishing tweets targeted at specific users. The content of each tweet is generated from topics extracted from the timelines of specific user groups, and clustering methods are used to identify the groups of users to prioritize as targets. The authors measured success by tracking the click-through rates of IP-tracked links. Figure 3 illustrates the whole process.
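The text does not name the clustering method used in [6]. Assuming plain k-means (Lloyd's algorithm) as a stand-in, the sketch below groups users by a hypothetical two-dimensional vector of topic shares extracted from their timelines; each resulting cluster is a group of users with similar interests. The feature vectors and function name are assumptions for illustration.

```python
import math
import random


def kmeans(points, k, iterations=20, seed=0):
    """Plain Lloyd's k-means: assign each point to its nearest centroid, then re-average."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # initialise with k randomly chosen input points
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        new_centroids = [
            tuple(sum(dim) / len(members) for dim in zip(*members)) if members else centroids[c]
            for c, members in enumerate(clusters)
        ]
        if new_centroids == centroids:  # assignments have stabilised
            break
        centroids = new_centroids
    labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
    return centroids, labels


# Hypothetical per-user topic shares: (fraction of tweets on topic A, fraction on topic B).
users = [(0.9, 0.1), (0.8, 0.2), (0.85, 0.15), (0.1, 0.9), (0.2, 0.8), (0.15, 0.85)]
centroids, labels = kmeans(users, k=2)
print(labels)
```

Which numeric label each group receives depends on the random initialisation; only the grouping itself is meaningful.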

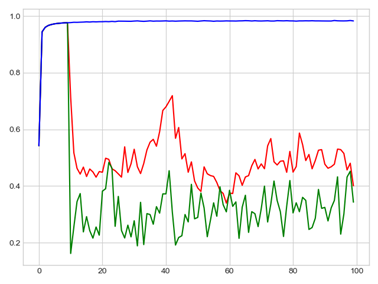

Data Poisoning Attacks

Data poisoning is one of the most common attacks launched from edge devices in collaborative machine learning, where it is used to degrade the performance of the shared model. Previously, attackers crafted poisoned labels or feature vectors manually by studying the relationship between the poisoned data and the model's performance. In our research lab, we recently studied this phenomenon and used machine learning to infer a more accurate relationship between poisoned data and its effect on model performance. We then fed several parameters of this relationship into a machine learning model to generate poisoned labels. Tracking the accuracy drop as training proceeded, we found that the poisoned labels generated by the ML approach degrade model accuracy significantly. Figure 4 shows the results we obtained: the blue curve denotes the accuracy of a model without any data poisoning attack, the red curve denotes the accuracy under a data poisoning attack with human-crafted labels, and the green curve denotes the accuracy under an attack with ML-crafted labels.
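The ML-based label generator from our study is not reproduced here. As a much simpler, hypothetical illustration of why poisoned labels hurt a model, the sketch below trains a nearest-centroid classifier on toy 1-D data and flips an increasing number of class-1 training labels that lie near the decision boundary. The data, the classifier, and the flipping heuristic are all assumptions chosen so the effect is easy to see, and accuracy is measured against the poisoning budget rather than over training, as in Figure 4.

```python
def fit_centroids(xs, ys):
    """Nearest-centroid classifier: one mean per class label (1-D features)."""
    centroids = {}
    for label in (0, 1):
        members = [x for x, y in zip(xs, ys) if y == label]
        centroids[label] = sum(members) / len(members)
    return centroids


def predict(centroids, x):
    """Return the label whose centroid is closest to x."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def accuracy(centroids, xs, ys):
    return sum(predict(centroids, x) == y for x, y in zip(xs, ys)) / len(xs)


def flip_near_boundary(xs, ys, budget):
    """Hypothetical attacker heuristic: relabel the `budget` class-1 training points
    closest to the clean decision boundary (x = 0) as class 0."""
    class1 = sorted((i for i, y in enumerate(ys) if y == 1), key=lambda i: abs(xs[i]))
    poisoned = list(ys)
    for i in class1[:budget]:
        poisoned[i] = 0
    return poisoned


# Toy, perfectly separable 1-D data: class 0 is negative, class 1 is positive.
train_x = [-k / 10 for k in range(1, 21)] + [k / 10 for k in range(1, 21)]
train_y = [0] * 20 + [1] * 20
test_x = [-(k - 0.5) / 10 for k in range(1, 21)] + [(k - 0.5) / 10 for k in range(1, 21)]
test_y = [0] * 20 + [1] * 20

accuracies = []
for budget in (0, 4, 8, 12):  # number of poisoned (flipped) training labels
    model = fit_centroids(train_x, flip_near_boundary(train_x, train_y, budget))
    accuracies.append(accuracy(model, test_x, test_y))
print(accuracies)  # → [1.0, 0.95, 0.9, 0.85]
```

Each batch of flipped labels drags the class-0 centroid toward class 1, moving the decision threshold to the right so that more genuine class-1 test points are misclassified.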

References:

[1] Singh, Dhananjay. “Captcha Improvement: Security from DDoS Attack.” Insights2Techinfo (2021): pp.1.

[2] Aiken, William, and Hyoungshick Kim. “POSTER: DeepCRACk: Using deep learning to automatically crack audio CAPTCHAs.” Proceedings of the 2018 on Asia Conference on Computer and Communications Security. 2018.

[3] Arain, Rafaqat Hussain, et al. “A deep learning model for recognition of complex Text-based CAPTCHAs.” IJCSNS 18 (2018): 103.

[4] Trieu, Khoa, and Yi Yang. “Artificial intelligence-based password brute force attacks.” Proc. MWAIS 39 (2018).

[5] Fang, Zhiyang, et al. “Evading anti-malware engines with deep reinforcement learning.” IEEE Access 7 (2019): 48867-48879.

[6] Seymour, John, and Philip Tully. “Weaponizing data science for social engineering: Automated E2E spear phishing on Twitter.” Black Hat USA (2016). https://www.blackhat.com/docs/us-16/materials/us-16-Seymour-Tully-Weaponizing-Data-Science-For-Social-Engineering-Automated-E2E-Spear-Phishing-On-Twitter-wp.pdf

Cite this article as:

K. Yadav, B. B. Gupta, D. Peraković (2022), Developing Cyber-Attacks with Machine Learning, Insights2Techinfo, pp.1