By: C S Nakul Kalyan, Asia University

Abstract

Advances in the digital world have driven significant progress in technologies such as Artificial Intelligence (AI) and deepfakes. Although these technologies have legitimate uses, their misuse also poses a growing threat to digital media. One such threat is scene and background manipulation in deepfake videos, which goes beyond traditional green-screen techniques. To address it, this study examines background removal based on U2-Net segmentation. Research on platform-based visual effects (VFX) shows how deepfakes operate within online media and how strongly lighting, framing, and audience reception shape their implications. To counter these risks, active lighting is used as a detection strategy: dynamic hue projection separates real participants from manipulated ones. In this study, we present an integrated methodology for background removal, scene-aware VFX, and real-time authentication. The main goal is to improve visual fidelity and strengthen security against deepfake systems.

Keywords

Deepfake detection, background removal, scene manipulation, real-time authentication, visual fidelity.

Introduction

Advances in deepfake technology have extended manipulation from simple face swaps to live scene and background manipulation. Traditional green-screen approaches are giving way to deep-learning-based background removal techniques, which improve visual quality and reduce boundary artifacts; the artifacts that remain can also be exploited for detection [3][4]. Research on platform-based visual effects (VFX) shows the importance of lighting and framing in creating realistic manipulations [1]. Active lighting exposes inconsistencies in manipulated video and can therefore serve as a real-time detection strategy, particularly during live video conferences [2][7]. In this article, we present an integrated methodology intended to improve both the realism and the security of deepfake scene modification.

Proposed Methodology

To defend against scene and background manipulation in deepfake videos, we propose a multilayered framework that combines computer vision, visual effects (VFX) practices, and real-time authentication strategies, as described below:

Dataset Construction and Pre-processing

Video Sources

The datasets are collected from publicly available repositories such as VoxCeleb and from YouTube content [1]. They include interviews, staged cinematic scenes, and manipulated deepfake media, ensuring that both real-world and manipulated content are represented.

Annotation Process

Annotation is performed by both human annotators and automated tools, which label the content along three axes:

Foreground/Background Segmentation:

The salient subject is separated from its surroundings, across videos recorded in different environments.

Manipulation Type:

Each video is labeled with the manipulation applied to it, such as face swap, background replacement, or composite VFX [4][6].

Scene Context:

Each video is labeled by its scene context, such as an entertainment scene, a remake scene, or misinformation [5].
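The three annotation axes above can be captured in a simple per-frame record. The sketch below is a hypothetical schema, not the authors' tooling: the class and field names (`FrameAnnotation`, `ManipulationType`, `SceneContext`, `annotated_by`) and the `NONE` label for authentic footage are our assumptions; only the label values themselves come from the text.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ManipulationType(Enum):
    # Labels listed in the text; NONE (authentic footage) is an assumption.
    NONE = "none"
    FACE_SWAP = "face_swap"
    BACKGROUND_REPLACEMENT = "background_replacement"
    COMPOSITE_VFX = "composite_vfx"


class SceneContext(Enum):
    # Scene-context labels listed in the text.
    ENTERTAINMENT = "entertainment"
    REMAKE = "remake"
    MISINFORMATION = "misinformation"


@dataclass
class FrameAnnotation:
    """Hypothetical per-frame annotation record."""
    video_id: str
    frame_index: int
    foreground_mask: List[List[int]]  # 1 = salient subject, 0 = background
    manipulation: ManipulationType
    context: Optional[SceneContext] = None  # may be unset for real footage
    annotated_by: str = "human"  # hypothetical field: "human" or "auto"

    def foreground_fraction(self) -> float:
        """Share of pixels labeled as foreground (0.0 for an empty mask)."""
        total = sum(len(row) for row in self.foreground_mask)
        fg = sum(sum(row) for row in self.foreground_mask)
        return fg / total if total else 0.0
```

For example, a face-swap frame with the 2 x 2 mask `[[0, 1], [1, 1]]` has a foreground fraction of 0.75.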

Foreground Extraction and Background Removal

U2-Net Architecture

The U2-Net architecture separates the foreground subject using a fine-tuned segmentation model [3]. Using this architecture increases accuracy in boundary regions such as the hair, chin, and jawline.
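U2-Net produces a per-pixel saliency map for each input frame. A minimal post-processing sketch, assuming that map is already available as a 2-D NumPy array (the network call and its weights are not shown): the map is min-max normalized into [0, 1], a common step for U2-Net outputs, and then used either as a soft alpha matte or thresholded into a binary mask. The function names and the 0.5 threshold are illustrative assumptions.

```python
import numpy as np


def saliency_to_mask(saliency, threshold=0.5):
    """Convert a raw H x W saliency map (assumed U2-Net output) into masks.

    Returns (alpha, binary): alpha is the min-max normalized map in [0, 1],
    usable as a soft matte; binary is alpha thresholded at `threshold`.
    """
    s = np.asarray(saliency, dtype=np.float64)
    lo, hi = s.min(), s.max()
    if hi - lo < 1e-12:
        # A flat map carries no salient structure: treat it all as background.
        alpha = np.zeros_like(s)
    else:
        alpha = (s - lo) / (hi - lo)
    binary = (alpha >= threshold).astype(np.uint8)
    return alpha, binary


def extract_foreground(frame, alpha):
    """Weight each pixel of an H x W x 3 frame by the soft matte."""
    return np.asarray(frame, dtype=np.float64) * alpha[..., None]
```

Keeping the soft matte rather than only the binary mask preserves fractional values along hair and jawline boundaries, which a hard threshold would discard.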

Scene Recomposition

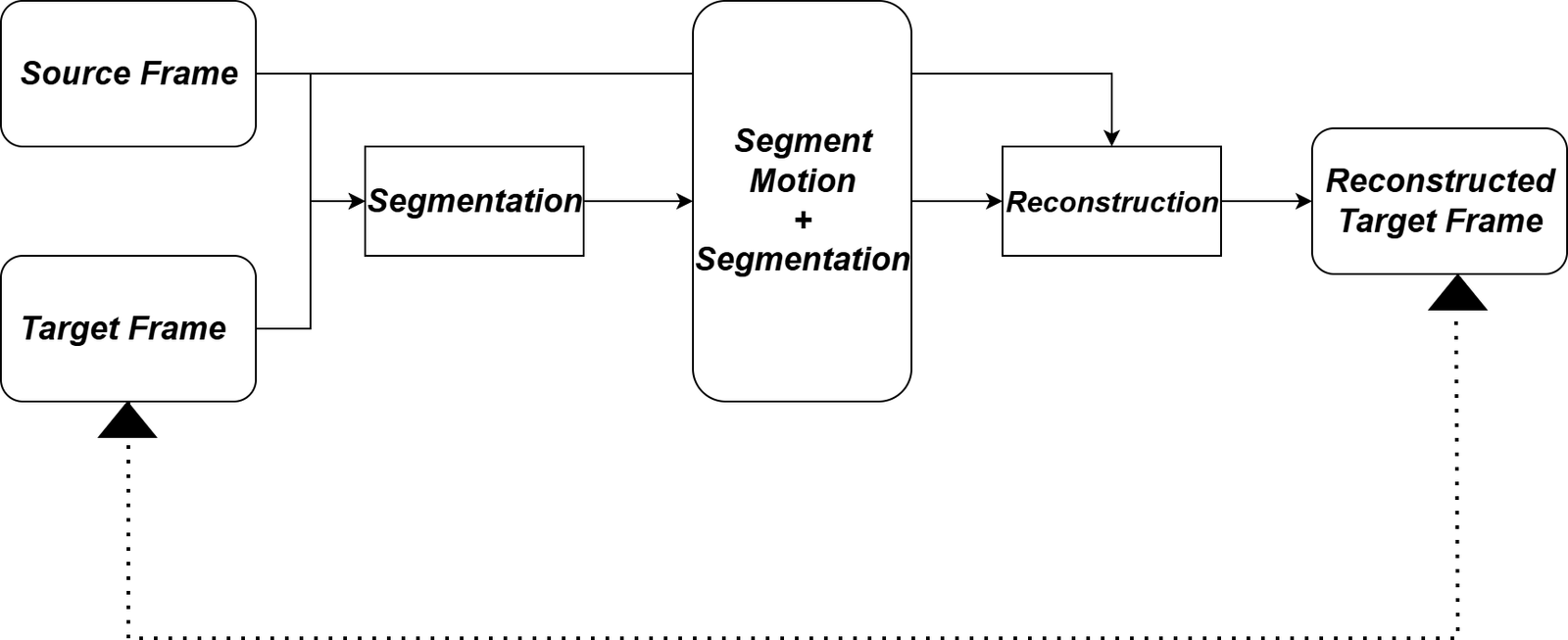

Scene recomposition combines the extracted subjects with newly generated backgrounds. This step reduces double borders and edge distortions when subjects are placed into new environments [3][4]. The architecture of the motion co-segmentation approach is illustrated in Figure 1.
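Recomposition can be sketched with standard alpha ("over") compositing, assuming the subject frame, the new background, and a soft matte (for example from the segmentation step) are aligned arrays at the same resolution. This is the generic compositing formula, not necessarily the exact recomposition used in [3]; the function name is hypothetical.

```python
import numpy as np


def recompose(fg, bg, alpha):
    """Alpha ("over") compositing: out = alpha * fg + (1 - alpha) * bg.

    fg, bg: H x W x 3 float arrays at the same resolution and color space;
    fg is the original (not premultiplied) frame.
    alpha:  H x W matte in [0, 1], 1 = subject, 0 = new background.
    """
    if fg.shape != bg.shape or fg.shape[:2] != alpha.shape:
        raise ValueError("foreground, background and alpha must be aligned")
    a = np.clip(alpha, 0.0, 1.0)[..., None]  # broadcast over color channels
    return a * fg + (1.0 - a) * bg
```

Because alpha takes fractional values along the boundary, edge pixels blend subject and background instead of switching abruptly, which is what suppresses visible borders.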

Scene-Aware VFX Integration

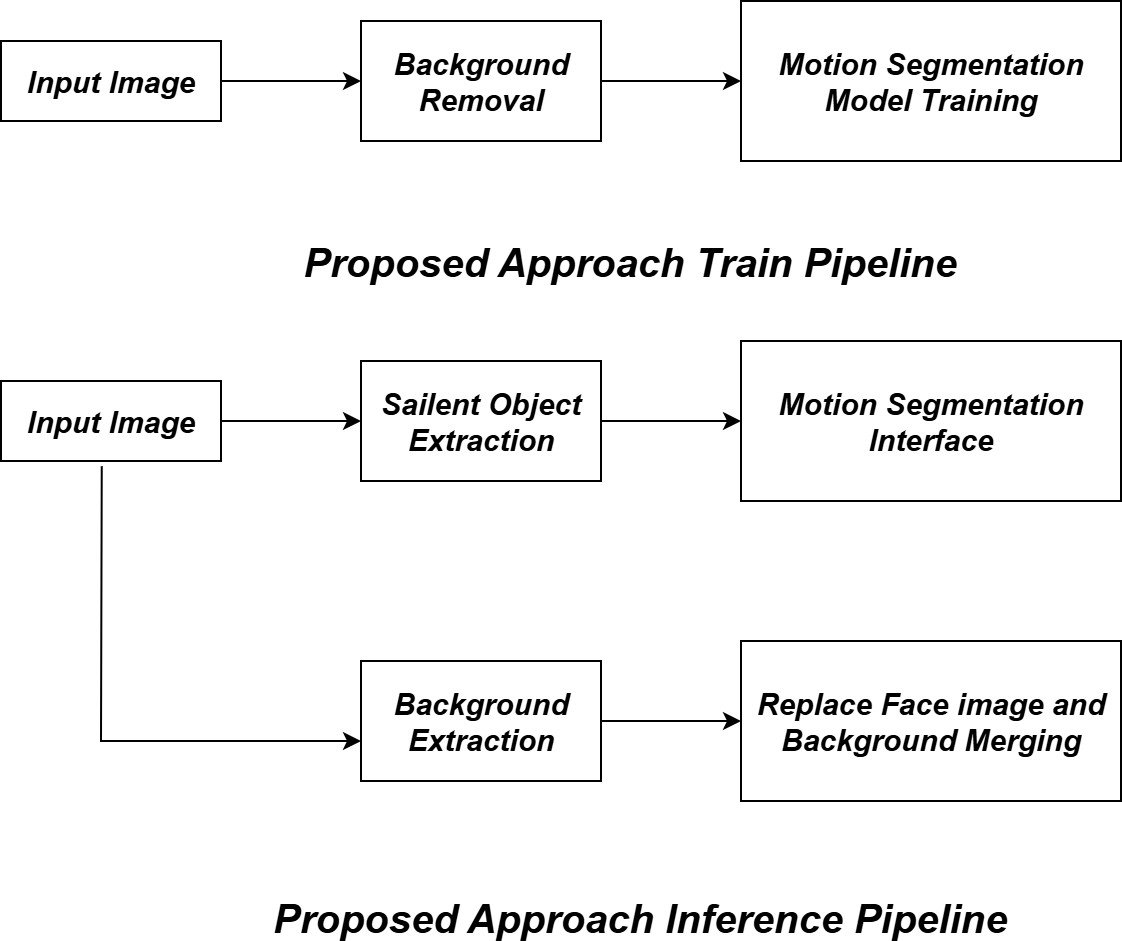

The training and inference pipeline of the proposed approach is shown in Figure 2.

Platform VFX Practices

Following VFX techniques used on YouTube [1][6], the manipulated subjects are merged into their backgrounds through:

Lighting Consistency:

Ambient and direct light are matched between the source and target scenes.

Resolution and Framing:

Scale and pixel density are matched between the subject and the target scene to eliminate visible resolution mismatches [1].

Contextual Embedding:

Manipulated content is placed in recognizable environments, such as social media and cinematic settings, to increase its realism [1][6].
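The lighting-consistency and resolution-matching steps can be approximated as below. This is a simplified stand-in, not the platform workflows described in [1]: lighting is matched by shifting and scaling each RGB channel of the subject to the target scene's mean and standard deviation (a simplified variant of Reinhard-style color transfer, which originally works in a decorrelated color space), and resolution is matched with a nearest-neighbour resize. The function names, the 8-bit value range, and the choice of statistics are assumptions.

```python
import numpy as np


def match_color_stats(subject, scene, mask):
    """Match per-channel mean/std of the masked subject pixels to the scene.

    subject: H x W x 3, scene: any H' x W' x 3 (8-bit value range assumed),
    mask: H x W, nonzero where the subject is.
    """
    out = np.asarray(subject, dtype=np.float64).copy()
    sel = np.asarray(mask).astype(bool)
    if not sel.any():
        return out  # no subject pixels: nothing to relight
    for c in range(out.shape[2]):
        src = out[sel, c]
        tgt = np.asarray(scene, dtype=np.float64)[..., c]
        src_std = src.std()
        scale = tgt.std() / src_std if src_std > 1e-8 else 0.0
        # Center on zero, rescale to the scene's spread, shift to its mean.
        out[sel, c] = (src - src.mean()) * scale + tgt.mean()
    return np.clip(out, 0.0, 255.0)


def resize_nearest(img, out_h, out_w):
    """Nearest-neighbour resize so the subject layer matches the target
    frame's resolution before compositing."""
    in_h, in_w = img.shape[:2]
    rows = np.arange(out_h) * in_h // out_h
    cols = np.arange(out_w) * in_w // out_w
    return img[rows[:, None], cols[None, :]]
```

Matching only first- and second-order color statistics is crude (it ignores light direction and shadows), but it shows the core idea of bringing the subject's ambient tone in line with the new scene.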

Deepfake Synthesis Optimization

Generative Models

Autoencoders and GAN-based models are used to align facial expressions, lip movements, and head positions, while a motion segmentation model aligns these operations across video frames [6].

Error Minimization

Error minimization focuses on border artifacts such as hairline irregularities and double edges, lighting mismatches across scene elements, and compression-induced glitches introduced during recompositing [4].
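One common way to suppress hard seams and double edges is to feather the matte so that only a narrow band around the subject boundary receives fractional alpha. A minimal sketch, assuming a binary H x W mask and a simple box blur; the article does not specify its error-minimization technique, so the blur choice, the radius, and the function names are assumptions. The same band also marks where border artifacts should be inspected.

```python
import numpy as np


def feather_mask(mask, radius=2):
    """Soften a binary H x W mask with a (2r+1) x (2r+1) box blur.

    Pixels deep inside the subject stay at 1 and far background at 0; only a
    band around the boundary receives fractional alpha, which hides hard seams.
    """
    m = np.asarray(mask, dtype=np.float64)
    if radius <= 0:
        return m
    k = 2 * radius + 1
    padded = np.pad(m, radius, mode="edge")  # replicate border values
    h, w = m.shape
    out = np.zeros_like(m)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (k * k)


def boundary_band(mask, radius=2):
    """Boolean map of the transition band (0 < alpha < 1) where border
    artifacts such as double edges would appear."""
    soft = feather_mask(mask, radius)
    return (soft > 0.0) & (soft < 1.0)
```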

Real-time Authentication via Active Illumination

Dynamic Hue Projection

During live video interactions, a temporally varying hue pattern is projected onto the participant's face using the device display [2][7], which makes it possible to detect whether background manipulation is being used.
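A temporally varying hue pattern could be generated as below. This is a sketch under our own assumptions, not the protocol of [2] or [7]: each display frame gets a randomly drawn, fully saturated hue, and consecutive hues are forced to differ by at least `min_jump` on the hue circle so that the changes are large enough to show up in the face's reflection. Only Python's standard `random` and `colorsys` modules are used; `hue_schedule` and its parameters are hypothetical.

```python
import colorsys
import random
from typing import List, Optional, Tuple


def hue_schedule(n_steps: int, seed: Optional[int] = None,
                 min_jump: float = 0.15) -> List[Tuple[float, Tuple[float, float, float]]]:
    """Random per-frame display colors for active illumination (hypothetical).

    Returns a list of (hue, (r, g, b)) with hue in [0, 1) and RGB in [0, 1].
    Consecutive hues differ by at least `min_jump` on the hue circle.
    """
    if not 0.0 <= min_jump < 0.5:
        raise ValueError("min_jump must lie in [0, 0.5)")
    rng = random.Random(seed)  # omit the seed in deployment so the pattern is unpredictable
    schedule = []
    prev = None
    for _ in range(n_steps):
        h = rng.random()
        while prev is not None and min(abs(h - prev), 1.0 - abs(h - prev)) < min_jump:
            h = rng.random()  # resample until the hue change is large enough
        schedule.append((h, colorsys.hsv_to_rgb(h, 1.0, 1.0)))  # full saturation and value
        prev = h
    return schedule
```

The intuition behind drawing the sequence at random is that a real-time deepfake pipeline cannot anticipate the next color, so it cannot pre-render a matching reflection.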

Verification Process

When the hue pattern is projected, real participants' faces naturally reflect the color changes in real time, which identifies participants who are not using background manipulation. Manipulated subjects either fail to reflect the light pattern or reflect it with a temporal delay, and both cases can be identified through correlation analysis [7].
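The correlation analysis can be sketched as a lagged Pearson correlation between the projected hue signal and the color signal measured on the face (for example, the mean hue of the face region per frame, which we assume is extracted elsewhere). A live participant should correlate strongly at near-zero lag, while a manipulated feed shows weak correlation or a peak at a larger lag. The function names and the thresholds `min_r` and `max_allowed_lag` are illustrative assumptions, not values from [7].

```python
import numpy as np


def best_lag_correlation(projected, observed, max_lag=10):
    """Find the non-negative frame lag at which the observed face signal best
    matches the projected signal; returns (lag, pearson_r)."""
    p = np.asarray(projected, dtype=np.float64)
    o = np.asarray(observed, dtype=np.float64)
    best_lag, best_r = 0, -1.0
    for lag in range(max_lag + 1):
        # Compare projected[t] with observed[t + lag].
        n = min(len(p), len(o) - lag)
        if n < 3:
            break
        a, b = p[:n], o[lag:lag + n]
        if a.std() == 0 or b.std() == 0:
            continue  # correlation is undefined for a constant signal
        r = float(np.corrcoef(a, b)[0, 1])
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag, best_r


def is_live(projected, observed, max_lag=10, min_r=0.8, max_allowed_lag=2):
    """Hypothetical decision rule: the face must reflect the pattern strongly
    (r >= min_r) and promptly (lag <= max_allowed_lag frames)."""
    lag, r = best_lag_correlation(projected, observed, max_lag)
    return r >= min_r and lag <= max_allowed_lag
```

A feed whose reflection matches the projection exactly but five frames late is rejected by this rule, as is a feed whose color changes are unrelated to the projected pattern.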

Evaluation Metrics

The evaluation uses the following metrics:

Visual Realism:

The Mean Opinion Score (MOS) given by human evaluators for the realism of recomposed backgrounds.

Artifact Reduction:

A quantitative assessment of border artifacts, such as the pixel overlap error rate [4].

Detection Accuracy:

The precision, recall, and F1-score of active illumination verification [2].

Contextual Believability:

A qualitative assessment of viewer trust, based on responses collected from platforms such as YouTube and conferencing applications [1]. The performance of the different detection methods across these metrics is summarized in Table 1 below:

Table 1: Performance evaluation metrics across different methods

| Detection Method | Precision (%) | Recall (%) | F1-Score (%) | Visual Realism (MOS) | Artifact Reduction |
| --- | --- | --- | --- | --- | --- |
| Green Screen Detection | 72.3 | 68.5 | 70.3 | 3.2/5.0 | 45.2 |
| U2-Net Background Segmentation | 89.7 | 85.3 | 87.4 | 4.1/5.0 | 78.6 |
| Active Illumination (static) | 91.2 | 88.7 | 89.9 | 3.8/5.0 | 52.3 |
| Dynamic Hue Projection | 94.8 | 92.1 | 93.4 | 4.3/5.0 | 65.7 |
| Proposed Method | 96.5 | 94.8 | 95.6 | 4.6/5.0 | 85.2 |
| Platform VFX + Active Illumination | 93.7 | 90.4 | 92.0 | 4.4/5.0 | 81.9 |
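The quantitative metrics above can be computed as follows. This is a minimal sketch: the MOS confidence interval uses a normal approximation, and because the article does not define the pixel overlap error rate, it is assumed here to be the fraction of pixels whose foreground/background label disagrees with a reference mask. All function names are hypothetical.

```python
import math


def mean_opinion_score(ratings):
    """Mean of 1-5 ratings with a normal-approximation 95% confidence interval."""
    n = len(ratings)
    mos = sum(ratings) / n
    if n < 2:
        return mos, (mos, mos)  # no spread estimate from a single rating
    var = sum((x - mos) ** 2 for x in ratings) / (n - 1)  # sample variance
    half = 1.96 * math.sqrt(var / n)
    return mos, (mos - half, mos + half)


def pixel_error_rate(pred_mask, ref_mask):
    """Assumed definition: share of pixels whose foreground/background label
    disagrees with the reference mask."""
    flat_p = [v for row in pred_mask for v in row]
    flat_r = [v for row in ref_mask for v in row]
    if len(flat_p) != len(flat_r):
        raise ValueError("masks must have the same size")
    wrong = sum(1 for a, b in zip(flat_p, flat_r) if bool(a) != bool(b))
    return wrong / len(flat_p)


def precision_recall_f1(tp, fp, fn):
    """Detection metrics from counts, with 'manipulated' as the positive class."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def f1_from_pr(precision, recall):
    """F1 as the harmonic mean of precision and recall (any common scale)."""
    return 2 * precision * recall / (precision + recall)
```

As a consistency check, applying `f1_from_pr` to the precision and recall columns of Table 1 reproduces every reported F1-score to one decimal place; for the proposed method, 2 × 96.5 × 94.8 / (96.5 + 94.8) ≈ 95.6.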

Conclusion

Scene and background manipulation is an emerging advance in deepfake generation that goes beyond traditional green-screen techniques. This article combines background removal to reduce artifacts, platform VFX practices for scene integration, and active illumination for real-time detection, supporting deepfake applications that are both realistic and protected. Together, these integrated approaches improve visual fidelity and strengthen detection, striking a balance between digital media innovation and preventing the misuse of these advanced technologies.

References

- Lisa Bode. Deepfaking Keanu: YouTube deepfakes, platform visual effects, and the complexity of reception. Convergence, 27(4):919–934, 2021.

- Candice R Gerstner and Hany Farid. Detecting real-time deep-fake videos using active illumination. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 53–60, 2022.

- Andrey Kuznetsov. A new deep fake method based on background removal. In 2021 International Conference on Information Technology and Nanotechnology (ITNT), pages 1–4. IEEE, 2021.

- Falko Matern, Christian Riess, and Marc Stamminger. Exploiting visual artifacts to expose deepfakes and face manipulations. In 2019 IEEE Winter Applications of Computer Vision Workshops (WACVW), pages 83–92. IEEE, 2019.

- Kristof Meding and Christoph Sorge. What constitutes a deep fake? The blurry line between legitimate processing and manipulation under the EU AI Act. In Proceedings of the 2025 Symposium on Computer Science and Law, pages 152–159, 2025.

- Sonu Mittal, Mukesh Joshi, Prashant Vats, Govind Murari Upadhayay, Shailender Kumar Vats, and Shalendra Kumar. Virtual illusions: Unleashing deepfake expertise for enhanced visual effects in film production. In 2024 11th International Conference on Reliability, Infocom Technologies and Optimization (Trends and Future Directions) (ICRITO), pages 1–6. IEEE, 2024.

- Zhixin Xie and Jun Luo. Shaking the fake: Detecting deepfake videos in real time via active probes. arXiv preprint arXiv:2409.10889, 2024.

- V. Arya, A. Gaurav, B. B. Gupta, C. H. Hsu, and H. Baghban. Detection of malicious node in VANETs using digital twin. In International Conference on Big Data Intelligence and Computing, pages 204–212. Springer Nature Singapore, 2022.

- Y. Lu, Y. Guo, R. W. Liu, K. T. Chui, and B. B. Gupta. GradDT: Gradient-guided despeckling transformer for industrial imaging sensors. IEEE Transactions on Industrial Informatics, 19(2):2238–2248, 2022.

- A. Sedik, Y. Maleh, G. M. El Banby, A. A. Khalaf, F. E. Abd El-Samie, B. B. Gupta, …, and A. A. Abd El-Latif. AI-enabled digital forgery analysis and crucial interactions monitoring in smart communities. Technological Forecasting and Social Change, 177:121555, 2022.

- D. P. Agrawal, B. B. Gupta, S. Yamaguchi, and K. E. Psannis. Recent advances in mobile cloud computing. Wireless Communications and Mobile Computing, 2018.

Cite As

Kalyan C S N (2025) Scene and Background Manipulation in Deepfake Videos: Beyond Green Screens, Insights2Techinfo, pp.1